An Atlas of Monetary Risk Measures

This article offers a survey of monetary risk measures on \(L^\infty\) guided by a two-dimensional Atlas. The horizontal dimension tracks increasingly flexible portfolio additivity, from linear to comonotonic additive, coherent, convex, and monetary functionals; the vertical dimension tracks increasingly restrictive continuity assumptions, from the absence of a Fatou assumption, to its presence, to law invariance. The Atlas provides a compact framework for locating familiar examples and for understanding how algebraic structure and topological regularity interact through convex duality. The article adopts the actuarial loss sign convention and a pricing-oriented perspective. Alongside the classical theory, it explains how finitely additive and other non-Fatou examples describe what lies beyond the standard countably additive setting and why the usual regularity assumptions matter for risk management.

risk theory, convex, coherent, duality

Introduction

A famous paper, Frittelli and Rosazza Gianin (2002), puts “order in risk measures.” That order emerges from characterizing axioms, each of which scaffolds a tower of mathematical implications. The order is powerful because these implications resonate with both intuitive notions of risk and the practice of risk management. The scaffold is at once beautiful and useful, but also intimidating, because it is clad in advanced mathematics. Much of that mathematics is taken for granted in academic papers, making the literature hard for the novice. This paper aims to provide just enough mathematical background to make sense of the theory, sparing the reader thousand-page, multi-volume analysis texts.

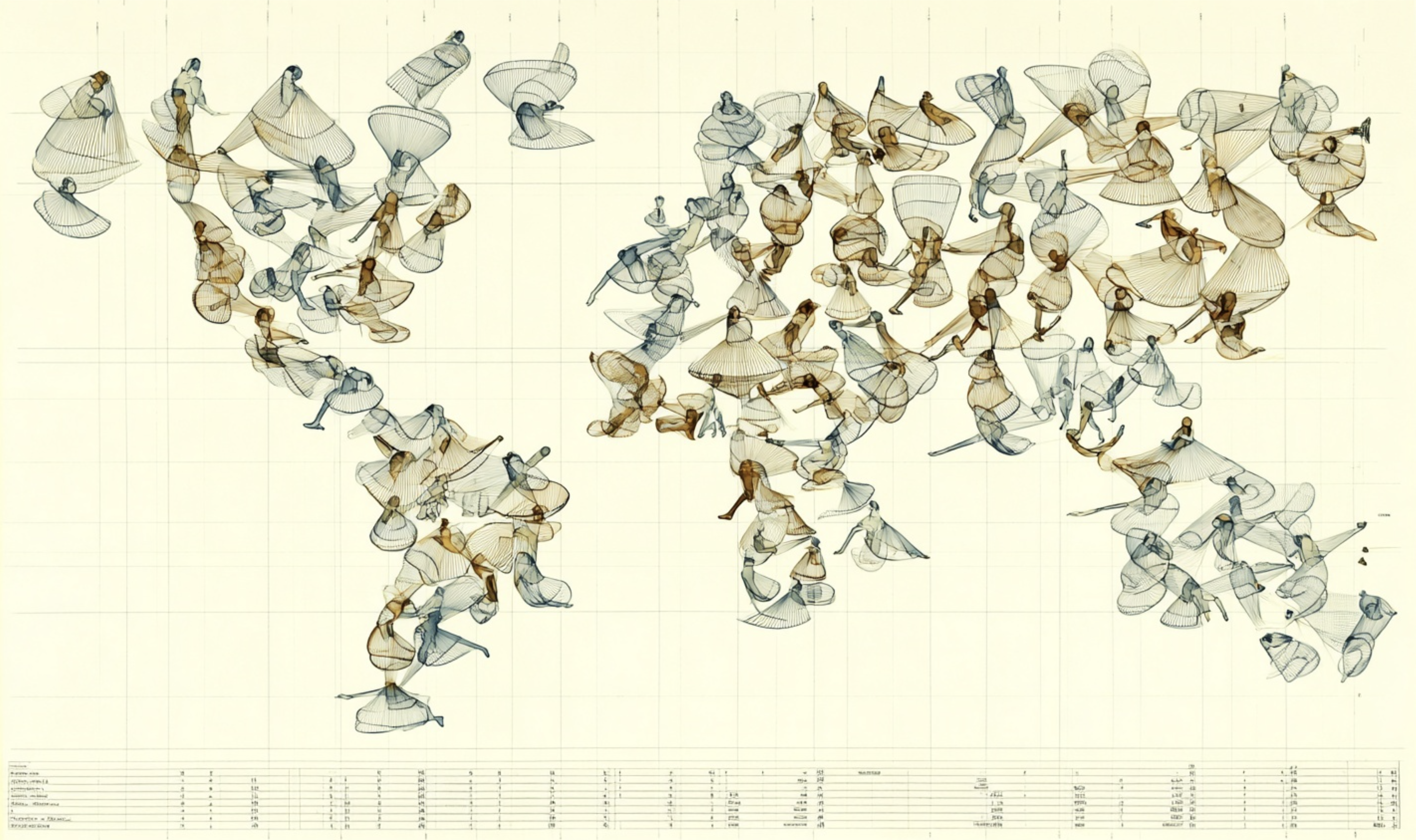

The presentation is arranged around an Atlas of risk measures, Figure 1. Its horizontal dimension tracks increasingly flexible algebraic notions of portfolio additivity, and the vertical increasingly restrictive notions of continuity. This two-dimensional framework allows us to locate any risk measure at the intersection of its economic behavior (diversification) and its mathematical regularity (limit behavior).

All measures in the Atlas are monetary: they share the foundational properties of monotonicity (if option \(B\) is worse than option \(A\) in every outcome, its risk must be at least as high) and cash invariance (adding a certain loss \(m\) shifts the risk by exactly \(m\)). Moving from left to right, we systematically relax the requirement of additivity over portfolios. An additive (linear) measure is the most rigid: risk is strictly additive and no diversification benefit exists. That is not a good model for insurance, although additive rules are often used to model prices in efficient financial markets. Comonotonic additive measures relax additivity to hold only for risks that move together. Coherent measures introduce the sine qua non of risk theory: subadditivity, which formalizes the benefit of diversification by requiring that the risk of a pool be no greater than the sum of its parts, independent of position size. Convex measures, a further relaxation, acknowledge that diversification benefits may diminish as position sizes grow, often due to liquidity constraints or concentrations of risk. Finally, monetary measures are the most flexible: only monotonicity and cash invariance remain, the two basic axioms for all risk functionals we consider.

While the columns define how a risk measure treats portfolio diversification, moving down the rows represents an increase in the measure’s mathematical niceness, or continuity. The top row represents measures in their most general, finitely additive form, without an explicit Fatou continuity assumption (defined in Section 1). In the middle row, we impose the Fatou property, a continuity assumption that ensures the risk measure behaves predictably under limits and allows for a dual representation using standard countably additive densities. Lastly, the bottom row imposes law invariance, requiring that the risk measure depend only on the distribution of a random variable, not on how it is realized on the underlying state space. In the convex setting, law invariance has powerful consequences, including the Fatou property. It represents the highest degree of regularity in the Atlas and forces the risk measure to respect several intuitive notions of risk.

An important mathematical idea unifying the Atlas is that of a convex function. Convexity is a mathematical way to express the benefits of diversification and pooling. In mathematics, convex functions are regarded as highly tractable, almost on par with continuous and differentiable functions. They often possess a dual representation based on affine (shifted linear) functions, making them easy to understand, interpret, and use in theory and practice. A central objective of the theory is describing exactly when this dual representation holds. We shall see that there is a subtle interplay between the measure’s algebraic flexibility and its topological continuity that emerges precisely through this dual representation.

Once you set up an axiomatic framework, mathematics tends to push back—“Problems worthy of attack prove their worth by hitting back,” as Piet Hein says. Things you wish true turn out false, while unexpected examples exhibit inconvenient truths. Mathematics pushes back particularly strongly in risk theory, so strongly that we can formulate a spoof rule: “No universal statement about risk is true.” The spoof rule certainly feels true, even though it is logically inconsistent. As we explore the Atlas we will uncover many unwanted implications. One is an interplay between the axioms. The monetary assumption already enforces a lot of continuity. Thus, in a sense convex duality is already lurking in the left four columns—the right-most lacks convexity. But continuity depends on topology, a notion of nearness, and that can be defined in many ways. The difference between the top two rows comes down to different topologies, and it is quite subtle. It leads to weird examples that are usually assumed away. We include them here to show why they are assumed away. Once you meet them, you will know.

This article has a different aim from comprehensive treatments such as the excellent book Föllmer and Schied (2016): the Atlas is intended as a tour guide rather than a reference work. We include more background theory, but cover only a subset of the material in Chapter 4 of Föllmer and Schied, “Monetary measures of risk.” We use the actuarial loss sign convention throughout, and take a pricing-oriented viewpoint rather than organizing the subject around acceptance sets or capital requirements. We also pay more attention to the edge of the map: not only the classical convex, coherent, and law-invariant cases, but also the stranger objects that lie beyond them, and the assumptions that rule them out. Finally, we make no explicit mention of utility and preference theory (their Chapter 2), instead keeping the focus on risk functionals useful in insurance and the relationships among them.

We are now a generation removed from the coherent revolution initiated by Artzner et al. (1999). The excitement of the early papers, and the rapid development of convex duality methods for risk measures in the early 2000s, are still palpable when reading that literature today. Yet the subject has now become part of the foundation of the field, and every new researcher must come to grips with it. That is not an easy task. This survey article aims to help the next generation. It focuses on common points of difficulty or confusion (at least in the author’s experience), and is offered in the hope that it will be useful to students and researchers as they learn the theory.

With that introduction and motivation, we turn to the contents. There are nine sections. Section 1 defines the axioms and terms that signpost the Atlas, and Section 2 lays out some basic results that follow easily from them. Section 3 explains the fundamental concept of convex duality. Section 4 states and sketches four deeper results in the theory: Schmeidler’s characterization of comonotonic additive functionals (second column); two implications of law invariance (bottom row), namely that it implies the Fatou property and that it respects second-order stochastic dominance; and Kusuoka’s representation of law-invariant convex risk measures, which explains why the law-invariant convex region is generated from Tail Value at Risk and clarifies the special role of distortion measures. Section 5 lays out examples in each region of the Atlas, and Section 6 offers thoughts on three miscellaneous topics: risk vs. value measures, acceptance sets, and pricing vs. regulation. Section 7 provides a very brief literature review. Section 8 covers deeper background material and proofs of two technical results. Finally, Section 9 reprises everything we have covered.

1 Definitions

We work on \(L^\infty=L^\infty(\Omega,\mathscr F,\mathsf P)\), the space of essentially bounded random variables modulo almost sure equality. We use the loss sign convention: larger values of \(X\) are worse.

A risk functional is a map \[ \rho:L^\infty \to \mathbb R. \]

For \(X,Y\in L^\infty\) and \(m\in\mathbb R\), we make the following definitions.

Monotone: \(X\le Y\) a.s. implies \(\rho(X)\le \rho(Y)\).

Cash invariant: \(\rho(X+m)=\rho(X)+m\).

Monetary: monotone and cash invariant.

Normalized: \(\rho(0)=0\).

Convex: \(\rho(\lambda X+(1-\lambda)Y)\le \lambda\rho(X)+(1-\lambda)\rho(Y)\), for \(0\le \lambda\le 1\).

Positively homogeneous: \(\rho(\lambda X)=\lambda \rho(X)\), for \(\lambda\ge 0\). Positively homogeneous implies normalized since \(\rho(0)=\rho(0\cdot 0)=0\rho(0) =0\).

Subadditive: \(\rho(X+Y)\le \rho(X)+\rho(Y)\).

Convex risk measure: monetary and convex.

Coherent risk measure: monetary, convex, and positively homogeneous; equivalently, monetary, subadditive, and positively homogeneous by the 2/3 lemma below.

Comonotone: \(X\) and \(Y\) are comonotone if \[ (X(\omega)-X(\omega'))(Y(\omega)-Y(\omega'))\ge 0 \] for all \(\omega,\omega'\), for suitably chosen representatives of \(X\) and \(Y\). Equivalently (Proposition 2), \(X=f(Z)\) and \(Y=g(Z)\) a.s. for some random variable \(Z\) and some increasing functions \(f,g\). In fact, we can take \(Z=X+Y\).

Comonotonic additive: if \(X,Y\) are comonotone then \[ \rho(X+Y)=\rho(X)+\rho(Y). \]

Law invariant: if \(X\) and \(Y\) have the same distribution (\(X\stackrel{d}=Y\)) then \(\rho(X)=\rho(Y)\).

Fatou property: if \(X_n\in L^\infty\), \(\sup_n \|X_n\|_\infty<\infty\), and \(X_n\to X\) a.s., then \[ \rho(X)\le \liminf_{n\to\infty}\rho(X_n). \] The Fatou property guarantees that the limit position of a sequence of acceptable positions remains acceptable because risk does not jump up unexpectedly.

Remark 1. Cash invariance is one of the most useful, and most revealing, axioms in risk theory. It looks innocent and seems reasonable, but it has major consequences. It says that risk is measured on a linear cash scale: a certain dollar is a certain dollar, no matter what other wealth is present. The axiom therefore separates risk from wealth. That separation is mathematically powerful and is one of the reasons the theory of monetary risk measures is so tractable. The existence of risk-free cash is also implicit.

The cash invariance axiom marks a genuine fork in the road: it is not the way expected utility behaves. Under diminishing marginal utility, a dollar matters more when wealth is low than when it is high. Cash invariance rules that out. For a commercial enterprise, or a trading desk, one often wants to measure the risk of a position itself, not the welfare of the ultimate owners after combining the position with all their outside wealth, and we should separate risk from wealth in that setting.

2 Basic Results

Remark 3. Cash invariance implies \[ \rho(m)=\rho(0+m)=\rho(0)+m,\quad\forall m\in\mathbb R, \] and therefore if \(\rho\) is normalized \[ \rho(m) = m \qquad\text{for all }m\in\mathbb R, \] consistent with the idea that cash is riskless. For a normalized risk measure, cash invariance is equivalent to cash additivity: \(\rho(X+m)=\rho(X)+\rho(m)\).

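For most purposes in the monetary setting it is no loss of generality to assume \(\rho\) is normalized: replacing \(\rho\) by \(\rho-\rho(0)\) preserves monotonicity, cash invariance, convexity, law invariance, and the Fatou property. In the few cases where normalization is needed, we assume it explicitly.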

The next proposition shows that monetary risk functionals are automatically Lipschitz continuous, with constant 1, in the \(L^\infty\) norm.

Proposition 1 If \(\rho\) is monetary, then for all \(X,Y\in L^\infty\), \[ |\rho(X)-\rho(Y)|\le \|X-Y\|_\infty. \]

Proof. Let \[ \delta:=\|X-Y\|_\infty. \] Then \[ Y-\delta \le X \le Y+\delta \qquad\text{a.s.} \] By monotonicity, \[ \rho(Y-\delta)\le \rho(X)\le \rho(Y+\delta). \] By cash invariance, \[ \rho(Y-\delta)=\rho(Y)-\delta, \qquad \rho(Y+\delta)=\rho(Y)+\delta. \] Hence \[ \rho(Y)-\delta\le \rho(X)\le \rho(Y)+\delta, \] which is equivalent to \[ |\rho(X)-\rho(Y)|\le \delta=\|X-Y\|_\infty. \]
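As a quick numerical sanity check of Proposition 1, the sketch below works on a finite state space in which every state has positive probability (an assumption made for illustration), so that \(\|X\|_\infty\) is simply the largest absolute entry of the loss vector. The functional is a hypothetical worst-case expectation \(\rho(X)=\max_j \mathsf Q_j(X)\) over a few randomly generated probability vectors \(\mathsf Q_j\); it is monotone and cash invariant, so the observed ratio \(|\rho(X)-\rho(Y)|/\|X-Y\|_\infty\) should never exceed 1.

```python
import numpy as np

# Hypothetical finite setting: n states, each with positive probability,
# so ||X||_inf is simply the largest absolute entry of the loss vector.
rng = np.random.default_rng(0)
n, k = 6, 4

# k illustrative scenarios: each row is a probability vector on the n states.
Q = rng.random((k, n))
Q /= Q.sum(axis=1, keepdims=True)


def rho(x):
    """Worst expected loss over the scenarios in Q (monotone and cash invariant)."""
    return float(np.max(Q @ x))


# Largest observed ratio |rho(X) - rho(Y)| / ||X - Y||_inf over random pairs.
worst_ratio = 0.0
for _ in range(1000):
    x, y = rng.normal(size=n), rng.normal(size=n)
    ratio = abs(rho(x) - rho(y)) / np.max(np.abs(x - y))
    worst_ratio = max(worst_ratio, ratio)

print(worst_ratio)  # stays at or below 1, as Proposition 1 predicts
```

The argument in the proof is visible here: each \(\mathsf Q_j(X)\) moves by at most \(\|X-Y\|_\infty\), and taking a maximum cannot amplify that movement.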

Lemma 1 (2/3 lemma) Let \(\rho\) be monetary and normalized. Any two of the following three properties imply the third:

- convexity,

- positive homogeneity,

- subadditivity.

Proof. Convexity and positive homogeneity imply subadditivity. For any \(X,Y\), \[ \rho(X+Y) = 2\rho\!\left(\frac{X+Y}{2}\right) \le 2\left(\frac{\rho(X)+\rho(Y)}{2}\right) = \rho(X)+\rho(Y). \]

Subadditivity and positive homogeneity imply convexity. For \(0\le \lambda\le 1\), \[ \rho(\lambda X+(1-\lambda)Y) \le \rho(\lambda X)+\rho((1-\lambda)Y) = \lambda \rho(X)+(1-\lambda)\rho(Y). \]

Convexity and subadditivity imply positive homogeneity. First, convexity with \(Y=0\) and normalization gives, for \(0\le \lambda\le 1\), \[ \rho(\lambda X) = \rho(\lambda X+(1-\lambda)0) \le \lambda \rho(X). \] Next, for \(\lambda\ge 1\), write \[ X=\frac1\lambda (\lambda X)+\left(1-\frac1\lambda\right)0. \] Convexity gives \[ \rho(X)\le \frac1\lambda \rho(\lambda X), \] so \[ \rho(\lambda X)\ge \lambda \rho(X). \] On the other hand, subadditivity gives for integers \(n\ge 1\), \[ \rho(nX)\le n\rho(X). \] Hence \(\rho(nX)=n\rho(X)\) for every \(X\in L^\infty\) and every integer \(n\ge 1\). Applying this identity to \(X/n\) gives \(\rho(X)=n\rho(X/n)\), that is, \[ \rho\!\left(\frac1n X\right)=\frac1n\rho(X). \] Therefore, for rational \(\lambda=m/n\) with integers \(m,n\ge 1\), \[ \rho\!\left(\frac mn X\right) = m\,\rho\!\left(\frac1n X\right) = \frac mn \rho(X), \] and \(\lambda=0\) is covered by normalization. Since a monetary functional is Lipschitz (Proposition 1), \(\lambda\mapsto \rho(\lambda X)\) is continuous, so the rational identity extends to all \(\lambda\ge 0\).

Lemma 2 Let \(\rho\) be convex, normalized, and monetary. Then for every \(X\in L^\infty\):

- If \(0\le \lambda\le 1\), then \[ \rho(\lambda X)\le \lambda \rho(X); \]

- If \(\lambda\ge 1\), then \[ \rho(\lambda X)\ge \lambda \rho(X). \]

Proof. For \(0\le \lambda\le 1\), convexity and normalization give \[ \rho(\lambda X) = \rho(\lambda X+(1-\lambda)0) \le \lambda \rho(X)+(1-\lambda)\rho(0) = \lambda \rho(X). \]

Now let \(\lambda\ge 1\). Then \[ X=\frac1\lambda (\lambda X)+\left(1-\frac1\lambda\right)0. \] Applying convexity again, \[ \rho(X) \le \frac1\lambda \rho(\lambda X)+\left(1-\frac1\lambda\right)\rho(0) = \frac1\lambda \rho(\lambda X). \] Multiplying by \(\lambda\) gives \[ \lambda \rho(X)\le \rho(\lambda X). \]

Remark 4. Lemma 2 shows the scaling signature of convexity. Small positions are sub-linearly risky, so splitting or reducing a position weakly lowers the capital requirement per unit. Large positions are super-linearly risky, so scaling up is discouraged. In liquidity language, convex risk measures penalize concentration. Small trades are weakly cheaper per unit than large trades, reflecting the idea that liquidity worsens as position size increases.
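Lemma 2 can be seen concretely with the entropic risk measure \(\rho_\theta(X)=\theta^{-1}\log\mathsf P(e^{\theta X})\), a standard convex, normalized, monetary functional that is not positively homogeneous. It is not introduced in this section, and the probabilities, losses, and \(\theta=1\) below are hypothetical. The sketch prints \(\rho(\lambda X)\) next to \(\lambda\rho(X)\): the first is no larger for \(\lambda\le 1\) and no smaller for \(\lambda\ge 1\).

```python
import numpy as np


def entropic(x, p, theta=1.0):
    """Entropic risk measure (1/theta) * log E_P[exp(theta * X)], loss convention.

    Shifting by max(x) before exponentiating avoids overflow; the shift is
    added back exactly, which is cash invariance at work.
    """
    x = np.asarray(x, dtype=float)
    m = x.max()
    return m + np.log(np.dot(p, np.exp(theta * (x - m)))) / theta


p = np.array([0.5, 0.3, 0.2])    # hypothetical state probabilities
x = np.array([-1.0, 2.0, 5.0])   # hypothetical loss vector
r = entropic(x, p)

for lam in [0.25, 0.5, 1.0, 2.0, 4.0]:
    # Lemma 2: rho(lam X) <= lam rho(X) for lam <= 1, and >= for lam >= 1.
    print(f"lambda={lam:4}: rho(lambda X)={entropic(lam * x, p):8.4f}  "
          f"lambda*rho(X)={lam * r:8.4f}")
```

The gap between the two columns widens as \(\lambda\) moves away from 1, which is the concentration penalty described in Remark 4.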

Next, we present some basic results about comonotonicity.

Remark 5. Every random variable is comonotone with every constant. Indeed, if \(Y \equiv c\) a.s., then for all \(\omega,\omega'\), \[ (X(\omega)-X(\omega'))(Y(\omega)-Y(\omega')) = (X(\omega)-X(\omega')) \cdot 0 = 0 \ge 0, \] and hence \(X\) and \(c\) are comonotone.

Comonotonic additivity gives a translation formula.

Lemma 3 Assume \(\rho\) is comonotonic additive. Then for every constant \(m\), \[ \rho(X+m)=\rho(X)+\rho(m). \]

Proof. By Remark 5, \(X\) and \(m\) are comonotone, so comonotonic additivity gives \(\rho(X+m)=\rho(X)+\rho(m)\).

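Remark 6. To get cash invariance from Lemma 3 we still need \[ \rho(m)=m \qquad\text{for constants }m. \] This does not follow from normalization. Comonotonic additivity already forces \(\rho(0)=\rho(0+0)=2\rho(0)\), so \(\rho(0)=0\) automatically, yet \(\rho(X)=2\,\mathsf P(X)\) is monotone and comonotonic additive with \(\rho(m)=2m\). What is needed is the calibration \(\rho(1)=1\): since constants are comonotone, \(m\mapsto\rho(m)\) is additive, and for monotone \(\rho\) this gives \(\rho(m)=m\,\rho(1)\).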

The next lemma shows that monetary, comonotonic additive functionals are positively homogeneous.

Lemma 4 Assume \(\rho\) is monetary and comonotonic additive. Then \(\rho\) is positively homogeneous.

Proof. The first step establishes integer homogeneity. Any random variable is comonotone with nonnegative multiples of itself, so \((n-1)X\) and \(X\) are comonotone and induction gives, for \(n\in\mathbb N\), \[ \rho(nX)=\rho(X+\cdots+X)=n\rho(X). \]

The second step extends to rational homogeneity. Let \(\lambda=m/n\in \mathbb Q_+\) with \(m,n\in\mathbb N\). Then \[ n\,\rho\!\left(\frac mn X\right) = \rho\!\left(n\frac mn X\right) = \rho(mX) = m\rho(X), \] so \[ \rho(\lambda X)=\lambda \rho(X). \]

The last step extends from rationals to all \(\lambda\ge 0\). Because \(\rho\) is monetary, it is \(L^\infty\)-Lipschitz. Hence \[ |\rho(\lambda X)-\rho(\mu X)| \le \|(\lambda-\mu)X\|_\infty = |\lambda-\mu|\,\|X\|_\infty, \] showing that \(\lambda\mapsto \rho(\lambda X)\) is continuous on \([0,\infty)\). Since homogeneity holds for all nonnegative rationals, it holds for all \(\lambda\ge 0\) because \(\mathbb Q\) is dense in \(\mathbb R\).

Corollary 1 Every monetary comonotonic additive convex risk measure is coherent.

Proof. Lemma 4 gives positive homogeneity, so \(\rho\) is monetary, convex, and positively homogeneous, that is, coherent. Moreover, since constants are comonotone, \(\rho(m)=\rho(0+m)=\rho(0)+\rho(m)\), so \(\rho(0)=0\) and \(\rho\) is normalized; the 2/3 lemma then turns convexity into subadditivity, giving the equivalent form of coherence.

Proposition 2 Let \(X,Y \in L^\infty\). The following are equivalent.

- \(X\) and \(Y\) are comonotone.

- There exist a random variable \(Z\) and increasing functions \(f,g:\mathbb R\to\mathbb R\) such that \[ X = f(Z), \qquad Y = g(Z) \qquad\text{a.s.} \]

Proof. The implication \((2)\Rightarrow(1)\) is immediate: if \(X=f(Z)\) and \(Y=g(Z)\) with \(f,g\) increasing, then for all \(\omega,\omega'\), \[ (X(\omega)-X(\omega'))(Y(\omega)-Y(\omega')) = (f(Z(\omega))-f(Z(\omega')))(g(Z(\omega))-g(Z(\omega'))) \ge 0, \] because the two factors always have the same sign.

For \((1)\Rightarrow(2)\), we can take \[ Z:=X+Y, \] and show that \(X\) and \(Y\) are increasing functions of \(Z\). Assume \(X\) and \(Y\) are comonotone, and suppose \[ Z(\omega)\le Z(\omega'). \] We claim this implies \[ X(\omega)\le X(\omega') \qquad\text{and}\qquad Y(\omega)\le Y(\omega'). \] For if, say, \(X(\omega)>X(\omega')\), then comonotonicity forces \(Y(\omega)\ge Y(\omega')\). But then \[ Z(\omega)=X(\omega)+Y(\omega)>X(\omega')+Y(\omega')=Z(\omega'), \] a contradiction. Hence \(X(\omega)\le X(\omega')\). The same argument gives \(Y(\omega)\le Y(\omega')\). Thus both \(X\) and \(Y\) are order-preserving functions of \(Z\): whenever \(Z(\omega)\le Z(\omega')\), one has \(X(\omega)\le X(\omega')\) and \(Y(\omega)\le Y(\omega')\). In particular, applying the claim in both directions, \(Z(\omega)=Z(\omega')\) forces \(X(\omega)=X(\omega')\) and \(Y(\omega)=Y(\omega')\), so one may define increasing functions \(f\) and \(g\) on the range of \(Z\) by \[ f(Z(\omega)):=X(\omega), \qquad g(Z(\omega)):=Y(\omega), \] and extend them monotonically to all of \(\mathbb R\). Then \[ X=f(Z), \qquad Y=g(Z) \qquad\text{a.s.} \]
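The proof of Proposition 2 is constructive, and on a finite state space it can be run directly. The sketch below uses hypothetical integer-valued losses (so that equality tests are exact), checks the pairwise definition of comonotonicity, builds lookup tables \(f\) and \(g\) on the range of \(Z=X+Y\), and confirms that both are increasing. The helper names are illustrative and do not come from any library.

```python
import numpy as np


def is_comonotone(x, y):
    """True if (x_i - x_j)(y_i - y_j) >= 0 for every pair of states i, j."""
    dx = x[:, None] - x[None, :]
    dy = y[:, None] - y[None, :]
    return bool(np.all(dx * dy >= 0))


def factor_through_sum(x, y):
    """Return Z = X + Y and tables f, g with X = f(Z), Y = g(Z).

    Raises ValueError if some value of Z carries two different values of X
    or Y, which cannot happen when X and Y are comonotone.
    """
    z = x + y
    f, g = {}, {}
    for zi, xi, yi in zip(z.tolist(), x.tolist(), y.tolist()):
        if f.setdefault(zi, xi) != xi or g.setdefault(zi, yi) != yi:
            raise ValueError("X and Y are not functions of X + Y")
    return z, f, g


def is_increasing(table):
    """True if the table's values are nondecreasing in its keys."""
    values = [table[key] for key in sorted(table)]
    return all(a <= b for a, b in zip(values, values[1:]))


x = np.array([0, 1, 1, 3, 7])   # hypothetical losses on five states
y = np.array([2, 2, 5, 5, 9])   # moves with x, so comonotone
z, f, g = factor_through_sum(x, y)
print(is_comonotone(x, y), is_increasing(f), is_increasing(g))  # True True True
```

The dictionaries play the role of \(f\) and \(g\) on the range of \(Z\); extending them monotonically to all of \(\mathbb R\) is not needed for a finite example.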

Lemma 5 (Denneberg (1994)) Let \(X_1,\dots,X_n \in L^\infty\) be pairwise comonotone. Then there exist a random variable \(Z\) and increasing functions \(f_1,\dots,f_n\) such that \[ X_i = f_i(Z) \qquad\text{a.s. for }i=1,\dots,n. \]

Consequently, if \(\rho\) is comonotonic additive, then \[ \rho\!\left(\sum_{i=1}^n X_i\right)=\sum_{i=1}^n \rho(X_i). \]

Proof. The first statement is the finite-family extension of Proposition 2: if \(X_1,\dots,X_n\) are pairwise comonotone, then with \[ Z:=X_1+\cdots+X_n \] each \(X_i\) is an increasing function of \(Z\).

For the second statement, the representation \[ X_i=f_i(Z), \qquad i=1,\dots,n, \] shows that every partial sum \[ S_k:=X_1+\cdots+X_k \] is also an increasing function of \(Z\). Hence \(S_{k-1}\) and \(X_k\) are comonotone for each \(k\), and comonotonic additivity gives \[ \rho(S_k)=\rho(S_{k-1})+\rho(X_k). \] Induction on \(k\) yields \[ \rho\!\left(\sum_{i=1}^n X_i\right)=\sum_{i=1}^n \rho(X_i). \]

Remark 7. Denneberg’s lemma is useful because it reduces a comonotone family to a one-factor picture: all variables move in step with the same underlying state variable \(Z\). It explains why Choquet functionals are additive on comonotone sums. For allocations \(X=\xi_0+\xi_1\), it shows there is an equivalence between allocations that increase with \(X\) and allocations where \(\xi_i\) are comonotone.
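The point of Remark 7 about Choquet functionals can be illustrated with Tail Value at Risk, a member of that family. On \(n\) equally likely states, and assuming \(k=n(1-p)\) is an integer, \(\mathrm{TVaR}_p\) is the average of the \(k\) largest losses. Comonotone losses share a common ordering of states, so the same \(k\) states carry the largest values of \(X\), \(Y\), and \(X+Y\), and the functional is additive; for a pair that is not comonotone it is only subadditive. The data below are hypothetical.

```python
import numpy as np


def tvar(x, k):
    """Average of the k largest losses in x.

    On n equally likely states this is TVaR at level p = 1 - k/n; the sketch
    assumes n * (1 - p) is an integer so no fractional state is needed.
    """
    return float(np.mean(np.sort(x)[-k:]))


x = np.array([1.0, 4.0, 2.0, 8.0, 3.0, 6.0])    # hypothetical losses
y = np.array([0.0, 3.0, 1.0, 10.0, 1.0, 5.0])   # same state ordering as x
w = np.array([5.0, 1.0, 4.0, 0.0, 3.0, 2.0])    # not comonotone with x
k = 2                                            # top 2 of 6 states: p = 2/3

print(tvar(x + y, k), tvar(x, k) + tvar(y, k))  # 14.5 14.5: additive
print(tvar(x + w, k), tvar(x, k) + tvar(w, k))  # 8.0 11.5: strictly subadditive
```

In the second line the largest losses of \(X\) and \(W\) occur in different states, so pooling them offsets part of the tail; that offset is exactly what comonotonicity rules out.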

Figure 2 summarizes the results presented in this section. In the figure, properties defining coherent and convex risk measures are in grey. A solid line indicates a definition; for example, a monetary risk measure is monotone and cash (translation) invariant. A cell is defined by all solid lines emerging from it, reading the arrows as implies. Dashed lines indicate derived results; for example, positively homogeneous implies normalized. Respecting second-order stochastic dominance implies a positive loading (lower right).

3 Convex Duality

We now summarize the facts about convex duality needed for the Atlas. The material is standard, but the risk measure specialization is so central that it is worth showing in some detail. The theory exhibits the beauty of mathematics: abstract convex duality determines a representation of risk measures as the worst outcome over a set of scenarios, with each scenario suitably handicapped, a representation that comports with an intuitive rationale and with a regulator’s or risk manager’s approach to risk quantification. We start with the general theory, then specialize to \(L^\infty\) and the coherent case, and end with a geometric discussion.

Convex duality rests on two assumptions: convexity and lower semicontinuity. In finite dimensions, convexity looks like the senior partner. A proper convex function on \(\mathbb{R}^n\) is automatically continuous on the interior of its effective domain, so any failure of lower semicontinuity can occur only at the boundary, which is often a relatively small and peripheral exceptional set. The lower semicontinuity assumption appears almost pedantic, at most boundary housekeeping. In the infinite-dimensional settings relevant for monetary risk measures that picture changes completely, as we see in this section. For functionals on \(L^\infty\), with the dual-pair topology coming from \(L^1\), the ambient topology is much less forgiving: the interior may disappear, and the boundary is no longer a thin shell around a well-behaved core but is everywhere at once. Lower semicontinuity stands as an equal partner with convexity, not a technical add-on, and is precisely the condition ensuring that the biconjugate recovers the original functional rather than its lower semicontinuous envelope.

3.1 General Theory

To keep the discussion reasonably concrete, let \(X\) be a Banach space and let \(X'\) denote a subspace of its algebraic dual that separates points. The topology on \(X\) is taken to be the weakest topology making all \(x'\in X'\) continuous, denoted \[ \sigma(X,X'). \] Then the topological dual of \((X, \sigma(X,X'))\) is precisely \(X'\). The weak topology is generally much weaker than the norm topology: viewing \(X\) inside the product space \(\mathbb R^{X'}\), the weak topology uses coordinate-wise convergence, whereas the norm topology demands uniform control over the dual unit ball. The pairing between \(X\) and \(X'\) is written \[ \langle x,\mu\rangle = \mu(x). \] See Section 8.3 for more about dual pairs.

Definition 1 Let \[ f:X\to \mathbb R\cup\{+\infty\}. \] The function \(f\) is proper if \(f(x)<\infty\) for some \(x\). The convex conjugate of a proper function is \[ f^*(\mu):=\sup_{x\in X}\ \mu(x)-f(x), \qquad \mu\in X', \] and the biconjugate is \[ f^{**}(x):=\sup_{\mu\in X'}\ \mu(x)-f^*(\mu), \qquad x\in X. \]

Definition 2 Let \(X\) be a topological space, and let \(f:X\to(-\infty,\infty]\). Then \(f\) is lower semicontinuous, abbreviated lsc, if for every \(c\in\mathbb R\) the sub-level set \[ \{x\in X: f(x)\le c\} \] is closed. Equivalently, \(f\) is lower semicontinuous if for every \(c\in\mathbb R\) the strict super-level set \(\{x\in X:f(x)>c\}\) is open.

A lower semicontinuous function may jump downward, but it may not jump upward. In risk management, that is exactly the direction one wants: if a sequence of positions converges to a limit, we do not want the limiting position to have mysteriously greater risk than the approximating positions indicate. The definition depends on the topology because it is expressed in terms of closed sets. Convergence of positions is relative to the same topology.

Remark 8. For any proper \(f\), the function \(f^*\) is convex (it is the sup of affine functions and affine functions are convex) and \(\sigma(X',X)\)-lower semicontinuous (affine functions are lsc and sup of lsc functions is lsc), and \(f^{**}\) is convex and \(\sigma(X,X')\)-lower semicontinuous.

It is always true that \(f^{**}\le f\). Lower semicontinuity is the extra ingredient to ensure equality. The precise statement is:

Theorem 1 (Fenchel–Moreau convex duality) Let \((X,X')\) be a dual pair, and equip \(X\) with the topology \(\sigma(X,X')\). Let \[ f:X\to(-\infty,\infty] \] be proper. Then the biconjugate \(f^{**}\) is always convex and \(\sigma(X,X')\)-lower semicontinuous, and \[ f^{**}\le f. \] If, in addition, \(f\) is convex and \(\sigma(X,X')\)-lower semicontinuous, then \[ f^{**}=f. \]

Proof. For each fixed \(y\in X'\), the function \[ x \mapsto \langle x,y\rangle - f^*(y) \] is affine and \(\sigma(X,X')\)-continuous, hence convex and lower semicontinuous. Since \(f^{**}\) is the supremum of these affine functions, it follows that \(f^{**}\) is convex and \(\sigma(X,X')\)-lower semicontinuous.

Next we show \(f^{**}\le f\). For any \(x\in X\) and \(y\in X'\), \[ f^*(y)=\sup_{u\in X}\ \langle u,y\rangle-f(u) \ge \langle x,y\rangle-f(x), \] so \[ \langle x,y\rangle-f^*(y)\le f(x). \] Taking the supremum over \(y\in X'\) gives \[ f^{**}(x)\le f(x). \]

Now assume \(f\) is convex and \(\sigma(X,X')\)-lower semicontinuous, and fix \(x_0\in X\). To prove the reverse inequality \(f(x_0)\le f^{**}(x_0)\), let \(r<f(x_0)\). Then \[ (x_0,r)\notin \operatorname{epi}(f), \qquad \operatorname{epi}(f):=\{(x,t)\in X\times\mathbb R:t\ge f(x)\}. \] Since \(f\) is convex, \(\operatorname{epi}(f)\) is convex; since \(f\) is \(\sigma(X,X')\)-lower semicontinuous, \(\operatorname{epi}(f)\) is closed in \(X\times\mathbb R\) for the product topology \(\sigma(X,X')\times\) the usual topology on \(\mathbb R\). Hence, by the separating hyperplane theorem, there exist \((a,b)\in (X\times\mathbb R)' = X'\times\mathbb R\), with \((a,b)\ne (0,0)\), and \(\alpha\in\mathbb R\) such that \[ \langle a,x\rangle+bt\ge \alpha \quad\text{for all }(x,t)\in\operatorname{epi}(f), \] while \[ \langle a,x_0\rangle+br<\alpha. \] When \(f(x_0)<\infty\), one must have \(b>0\): \(b<0\) is impossible because the epigraph is upward closed in the \(t\)-direction (letting \(t\to\infty\) would violate the first inequality), and \(b=0\) is impossible because \((x_0,f(x_0))\in\operatorname{epi}(f)\) would then give \(\langle a,x_0\rangle\ge\alpha\). This covers the finite-valued risk functionals of interest. If \(f(x_0)=+\infty\), the case \(b=0\) can occur; one then adds \(k(\alpha-\langle a,\cdot\rangle)\), for large \(k>0\), to an affine minorant of \(f\), which keeps it below \(f\) and drives its value at \(x_0\) to \(+\infty\).

Set \[ y=-a/b, \qquad c=\alpha/b. \] Then the separation inequality becomes \[ f(x)\ge \langle x,y\rangle+c \quad\text{for all }x\in X, \] and the strict inequality at \((x_0,r)\) becomes \[ r<\langle x_0,y\rangle+c. \] The first display implies \[ \langle x,y\rangle-f(x)\le -c \quad\text{for all }x\in X, \] hence \[ f^*(y)\le -c. \] Therefore \[ \langle x_0,y\rangle-f^*(y)\ge \langle x_0,y\rangle+c>r, \] so by definition of \(f^{**}\), \[ f^{**}(x_0)\ge r. \] Since this holds for every \(r<f(x_0)\), we obtain \[ f^{**}(x_0)\ge f(x_0). \] Combined with \(f^{**}(x_0)\le f(x_0)\), this gives \[ f^{**}(x_0)=f(x_0). \] As \(x_0\) is arbitrary, \(f^{**}=f\).

Convexity is used in the proof to ensure \(\operatorname{epi}(f)\) is convex and lsc to ensure it is closed; together these allow application of the hyperplane separation theorem.
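Theorem 1 can be watched at work in one dimension. The sketch below computes \(f^*\) and \(f^{**}\) by brute force on finite grids of points and slopes; the grids stand in for the dual pair, and both grids and test functions are illustrative choices. For the convex \(f(x)=x^2\) the biconjugate reproduces \(f\) up to rounding. For the nonconvex double well \(f(x)=(x^2-1)^2\) it returns a convex minorant (approximately the convex envelope, which is \(0\) between \(-1\) and \(1\)), so \(f^{**}\le f\) with a gap of about \(1\) at the origin.

```python
import numpy as np

# Illustrative grids: primal points and candidate slopes (dual variables).
xs = np.linspace(-1.5, 1.5, 301)
mus = np.linspace(-12.0, 12.0, 2401)


def conjugate(fvals):
    """f*(mu) = max over grid x of mu * x - f(x)."""
    return np.max(mus[:, None] * xs[None, :] - fvals[None, :], axis=1)


def biconjugate(fvals):
    """f**(x) = max over grid mu of mu * x - f*(mu)."""
    fstar = conjugate(fvals)
    return np.max(xs[:, None] * mus[None, :] - fstar[None, :], axis=1)


convex_f = xs**2                 # convex: Fenchel-Moreau says f** = f
double_well = (xs**2 - 1)**2     # not convex: f** is a convex minorant

err_convex = np.max(np.abs(biconjugate(convex_f) - convex_f))
envelope = biconjugate(double_well)
gap = double_well - envelope

print(f"max |f** - f| for x^2: {err_convex:.2e}")
print(f"double well: min gap {gap.min():.2e}, gap at x=0 {gap[150]:.3f}")
```

The slope grid is chosen wide enough to contain every derivative of both test functions on \([-1.5,1.5]\); with a narrower slope range the computed \(f^{**}\) would still lie below \(f\) but would undershoot near the endpoints.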

Remark 9. Lower semicontinuity needed for the biconjugate formula is exactly lower semicontinuity with respect to the topology induced by the chosen dual pair. In the risk-measure application below, the relevant dual pair is \[ (L^\infty,L^1), \] so one needs \(\sigma(L^\infty,L^1)\)-lower semicontinuity.

Definition 3 Let \(f:X\to \mathbb R\cup\{+\infty\}\) be proper and convex. The subdifferential of \(f\) at \(x\in X\) is \[ \partial f(x) := \{\mu\in X' : f(y)\ge f(x)+\mu(y-x)\ \text{for all }y\in X\}. \]

By definition, \[ \mu\in\partial f(x) \iff f(y)\ge \mu(y)-(\mu(x)-f(x)) \ \text{for all }y, \] and therefore \(\mu\in\partial f(x)\) means that \(\mu\) defines a supporting affine functional to the epigraph of \(f\) at \(x\).

Proposition 3 (Fenchel-Young inequality) For every \(x\in X\) and \(\mu\in X'\), \[ f(x)+f^*(\mu)\ge \mu(x). \]

Proof. By its definition as a sup, \(f^*(\mu)\ge \mu(x)-f(x)\), which rearranges to give the result.

Proposition 4 Let \(f:X\to \mathbb R\cup\{+\infty\}\) be proper, convex, and \(\sigma(X,X')\)-lower semicontinuous. For \(x\in X\) and \(\mu\in X'\) the following are equivalent:

- \(\mu\in \partial f(x)\);

- \(x\in \partial f^*(\mu)\);

- Fenchel–Young’s inequality is an equality: \(f(x)+f^*(\mu)=\mu(x)\).

Proof. We show \((1)\iff(3)\iff(2)\).

Assume \(\mu\in\partial f(x)\). Then for all \(y\in X\), \[ f(y)\ge f(x)+\mu(y-x), \] so \[ \mu(y)-f(y)\le \mu(x)-f(x). \] Taking the supremum over \(y\) gives \[ f^*(\mu)\le \mu(x)-f(x). \] Fenchel–Young gives the reverse inequality and so we obtain equality \(f(x)+f^*(\mu)=\mu(x)\). Thus \((1)\Rightarrow(3)\).

Conversely, if \[ f(x)+f^*(\mu)=\mu(x), \] then for every \(y\in X\), \[ \mu(y)-f(y)\le f^*(\mu)=\mu(x)-f(x), \] hence \[ f(y)\ge f(x)+\mu(y-x), \] and so \(\mu\in\partial f(x)\). Thus \((3)\Rightarrow(1)\).

Now apply the equivalence \((1)\iff(3)\) to the proper convex function \(f^*\) on \(X'\) in place of \(f\): \[ x\in\partial f^*(\mu) \iff f^{**}(x)+f^*(\mu)=\mu(x). \] Since \(f\) is convex and lower semicontinuous, Fenchel–Moreau (Theorem 1) gives \(f^{**}=f\), so the right-hand side is exactly condition (3). Hence \((2)\iff(3)\). Without lower semicontinuity, \((3)\Rightarrow(2)\) still holds, because (3) forces \(f^{**}(x)\ge\mu(x)-f^*(\mu)=f(x)\ge f^{**}(x)\), but \((2)\Rightarrow(3)\) can fail.

Remark 10. The equivalences in Proposition 4, \[ \mu\in\partial f(x) \iff x\in\partial f^*(\mu) \iff f(x)+f^*(\mu)=\mu(x), \] are a simultaneous optimality relation: \(x\) is optimal for the supremum defining \(f^*(\mu)\), and \(\mu\) is optimal for the supremum defining \(f^{**}(x)\).

3.2 Specialization to risk measures on \(L^\infty\)

We now return to a convex monetary risk measure \(\rho:L^\infty\to\mathbb R\). We use the dual pair \((L^\infty,L^1)\), with pairing \(\langle X,Z\rangle := \mathsf P(XZ)\). This is the countably additive dual pair used for Fatou-type representations; it is not the full Banach dual of \(L^\infty\).

At first sight the dual variable in the representation of a convex risk measure appears as an arbitrary element \(Z \in L^1\). Proposition 5 below shows that whenever \(\rho^*(Z)<\infty\), the variable \(Z\) is in fact the Radon–Nikodym derivative of a countably additive probability measure \(\mathsf Q \ll \mathsf P\), so that \(Z=d\mathsf Q/d\mathsf P\). Thus the pairing can be written equivalently as \[ \mathsf{P}(XZ) = \mathsf{Q}(X)=\int X\,d\mathsf Q. \] We therefore move freely between the density notation \(Z\) and the measure notation \(\mathsf Q\), using whichever is more intuitive in context.

Once the dual variables are read as probability measures \(\mathsf Q \ll \mathsf P\), the dual representation acquires a natural risk-management interpretation. A risk measure evaluates a position by examining its expected loss under a family of alternative probability measures, and then taking the worst penalized value. Each such measure \(\mathsf Q\) is a scenario: not necessarily a single deterministic event, but a way of tilting the baseline model \(\mathsf P\) toward more adverse outcomes. In that sense, the dual representation expresses risk measurement as systematic stress testing across a family of scenarios.

Definition 4 The convex conjugate of \(\rho\) is \[ \rho^*(Z):=\sup_{X\in L^\infty} \mathsf P(XZ)-\rho(X), \qquad Z\in L^1. \] If \(\rho\) is \(\sigma(L^\infty,L^1)\)-lower semicontinuous, then Fenchel–Moreau gives \[ \rho(X) = \sup_{Z\in L^1} \mathsf P(XZ)-\rho^*(Z). \]

Proposition 5 links properties of \(\rho\) with those of the penalty function \(\alpha\) used to define it. It starts with an arbitrary \(\alpha\) on the dual space and defines \(\rho=\alpha^*\) on the primal space. Then \[ \rho^*=\alpha^{**}, \] and therefore \(\rho^*=\alpha\) exactly when \(\alpha\) is convex and lower semicontinuous in the relevant dual topology.

Proposition 5 Let \(\alpha:ba(\Omega,\mathscr F)\to(-\infty,\infty]\) be any function, and define \[ \rho(X):=\sup_{\nu\in ba}\, \langle X,\nu\rangle-\alpha(\nu), \qquad X\in L^\infty(\Omega,\mathscr F, \mathsf P), \] where \(\langle X,\nu\rangle=\int X\,d\nu\). Assume \(\rho(X)<\infty\) for all \(X\in L^\infty\).

Then the following hold.

(1) \(\rho\) is cash invariant if and only if \[ \operatorname{dom}\alpha\subseteq \set{\nu\in ba:\nu(\Omega)=1}. \]

(2) \(\rho\) is monotone if and only if \[ \operatorname{dom}\alpha\subseteq \set{\nu\in ba:\nu \text{ is positive}}. \]

(3) \(\rho\) is normalized (\(\rho(0)=0\)) if and only if \[ \inf_{\nu\in ba}\alpha(\nu)=0. \] Equivalently, \[ \inf_{\nu\in\operatorname{dom}\alpha}\alpha(\nu)=0. \]

(4) If \(\rho\) is well defined on \(L^\infty(\Omega,\mathscr F,\mathsf P)\), that is, if it depends only on \(\mathsf P\)-a.s. equivalence classes, then \[ \operatorname{dom}\alpha\subseteq \set{\nu\in ba:\nu\ll \mathsf P}. \]

Proof. For (1), let \(m\in\mathbb R\). Then \[ \begin{aligned} \rho(X+m) &=\sup_{\nu\in ba}\, \langle X+m,\nu\rangle-\alpha(\nu) \\ &=\sup_{\nu\in ba}\, \langle X,\nu\rangle+m\nu(\Omega)-\alpha(\nu). \end{aligned} \] If every \(\nu\) in \(\operatorname{dom}\alpha\) satisfies \(\nu(\Omega)=1\), then \[ \rho(X+m) =\sup_{\nu\in ba}\, \langle X,\nu\rangle+m-\alpha(\nu) =\rho(X)+m, \] so \(\rho\) is cash invariant.

Conversely, assume \(\rho\) is cash invariant, and let \(\nu\in\operatorname{dom}\alpha\). For every \(X\in L^\infty\) and every \(m\in\mathbb R\), \[ \rho(X+m)\ge \langle X,\nu\rangle+m\nu(\Omega)-\alpha(\nu). \] Since \(\rho(X+m)=\rho(X)+m\), we get for all \(m\) \[ \rho(X) \ge \langle X,\nu\rangle + m(\nu(\Omega)-1) - \alpha(\nu). \] If \(\nu(\Omega)\ne 1\), choosing \(m\to\pm\infty\) with the appropriate sign gives a contradiction. Hence \(\nu(\Omega)=1\).

For (2), assume first that every \(\nu\) in \(\operatorname{dom}\alpha\) is positive. By (4), each such \(\nu\) also vanishes on \(\mathsf P\)-null sets. If \(X\le Y\) almost surely, then \[ \langle X,\nu\rangle\le \langle Y,\nu\rangle \] for every such \(\nu\), hence \[ \rho(X)\le \rho(Y). \] So \(\rho\) is monotone.

Conversely, assume \(\rho\) is monotone, and let \(\nu\in\operatorname{dom}\alpha\). Suppose \(\nu\) is not positive. Then there exists \(A\in\mathscr F\) with \(\nu(A)<0\). For \(n\in\mathbb N\) let \[ X_n:=-n1_A\le 0. \] Monotonicity gives \[ \rho(X_n)\le \rho(0) \qquad\text{for all }n. \] But \[ \rho(X_n)\ge \langle X_n,\nu\rangle-\alpha(\nu) =-n\nu(A)-\alpha(\nu)\to+\infty, \] since \(\nu(A)<0\). This contradicts \(\rho(0)<\infty\). Thus \(\nu\) is positive.

For (3), \[ \rho(0)=\sup_{\nu\in ba}\, -\alpha(\nu) =-\inf_{\nu\in ba}\alpha(\nu). \] Therefore \(\rho(0)=0\) if and only if \(\inf\alpha=0\).

Finally, for (4), let \(\nu\in\operatorname{dom}\alpha\), and suppose \(\nu\not\ll \mathsf P\). Then there exists \(A\in\mathscr F\) with \[ \mathsf P(A)=0,\qquad \nu(A)\ne 0. \] In \(L^\infty\) we have \(1_A=0\), so for every \(X\in L^\infty\) and every \(n\in\mathbb N\), \[ X\pm n1_A=X \qquad\text{in }L^\infty. \] Choose the sign \(s\in\{-1,+1\}\) with \(s\,\nu(A)>0\). Then \(\rho(X+sn1_A)=\rho(X)\), and the representation gives \[ \rho(X) = \rho(X+sn1_A)\ge \langle X,\nu\rangle+n\,s\,\nu(A)-\alpha(\nu). \] The right-hand side tends to \(+\infty\) as \(n\to\infty\), contradicting the finiteness of \(\rho(X)\). Therefore every \(\nu\) in \(\operatorname{dom}\alpha\) must satisfy \(\nu\ll \mathsf P\).

Remark. There is obviously some chicken-and-egg going on here: do we define \(\rho\) first or the penalty? There is another route to the penalty via the acceptance set (see also Section 6.2). If \(\rho\) is a monetary risk measure, its acceptance set is defined as \[ \mathcal A_\rho := \{X\in L^\infty : \rho(X)\le 0\}. \] When \(\rho\) is convex, \(\mathcal A_\rho\) is convex, and one may define the associated penalty function by \[ \alpha_{\mathcal A}(\mathsf Q):=\sup_{X\in \mathcal A_\rho} \mathsf Q(X). \] This is a canonical, or minimal, penalty: if \[ \rho(X)=\sup_{\mathsf Q} \{\mathsf Q(X)-\alpha(\mathsf Q)\}, \] then every \(X\in\mathcal A_\rho\) satisfies \(\mathsf Q(X)-\alpha(\mathsf Q)\le \rho(X)\le 0\), so necessarily \[ \alpha_{\mathcal A}(\mathsf Q)\le \alpha(\mathsf Q) \] for all \(\mathsf Q\). Moreover, for a (finitely additive) probability \(\mathsf Q\), cash invariance gives \(X-\rho(X)\in\mathcal A_\rho\) and \(\mathsf Q(X)-\rho(X)=\mathsf Q(X-\rho(X))\), so this minimal penalty coincides with the convex conjugate \(\rho^*\) on probabilities. See

Proposition 6 Let \(\rho:L^\infty\to\mathbb R\) be convex and monetary. Then \(\rho\) has the Fatou property if and only if \(\rho\) is \(\sigma(L^\infty,L^1)\)-lower semicontinuous.

Remark 11. Proposition 6 is a pivotal result because it links two continuity notions formulated in strikingly different languages. The Fatou property is probabilistic: it concerns a sequence \((X_n)\) that converges almost surely to \(X\) and is uniformly bounded in \(L^\infty\), and asks that \(\rho(X)\) not exceed \(\liminf_n \rho(X_n)\). That is the natural notion from the perspective of a risk manager or pricing actuary, because it describes what it means for a sequence of loss positions to settle down state by state, except on a null set, without blowing up in size. Lower semicontinuity, by contrast, is inherently topological: it is defined in terms of closed sub-level sets, or equivalently by behavior under convergence in a specified topology. The two notions do not obviously have anything to do with one another. Indeed, almost sure convergence is not itself topological: on an atomless space such as \([0,1]\) with Lebesgue measure, it cannot be generated by any topology on \(L^\infty\).

The proposition shows that for convex monetary functionals on \(L^\infty\) the natural probability-theory closure condition used in practice is exactly equivalent to lower semicontinuity for the specific dual-pair topology \(\sigma(L^\infty,L^1)\). The uniform \(L^\infty\) bound is crucial in the bridge from one formulation to the other: if \(|X_n| \le M\) and \(X_n \to X\) almost surely, then for every \(Z \in L^1\) we have \(|X_n Z| \le M|Z|\) with \(M|Z| \in L^1\), so Lebesgue’s dominated convergence theorem gives \(\mathsf P(X_n Z) \to \mathsf P(XZ)\). In other words, bounded almost sure convergence implies \(\sigma(L^\infty,L^1)\)-convergence. That implication is the key mechanism behind the equivalence, and it is what allows us to pass from a statement about converging risks to the convex-analytic machinery of Fenchel–Moreau duality.
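A numerical sketch of this bridge, under illustrative assumptions that are not taken from the text. On \([0,1]\) with Lebesgue measure, take \(X_n=1_{\{u>1/n\}}\), which converges almost surely to \(X=1\) with \(|X_n|\le 1\), and take the density \(Z(u)=2u\). Dominated convergence predicts \(\mathsf P(X_nZ)=1-1/n^2\to\mathsf P(XZ)=1\). The midpoint rule below reproduces this up to discretization error.

```python
# Bounded a.s. convergence implies P(X_n Z) -> P(X Z), checked by midpoint-rule quadrature.

def midpoint_integral(g, m=100_000):
    """Approximate the integral of g over [0, 1] with m midpoint cells."""
    h = 1.0 / m
    return h * sum(g((k + 0.5) * h) for k in range(m))

def Z(u):
    return 2.0 * u                  # a probability density on [0, 1]

def X_n(n):
    return lambda u: 1.0 if u > 1.0 / n else 0.0   # |X_n| <= 1 and X_n -> 1 a.s.

def pairing(n):
    """Numerical value of P(X_n Z)."""
    Xn = X_n(n)
    return midpoint_integral(lambda u: Xn(u) * Z(u))

for n in (1, 2, 10, 100):
    print(n, round(pairing(n), 4), 1 - 1 / n**2)
```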

The proof relies on the following technical lemma, which we prove in Section 8.8.

Lemma 6 Let \(C \subseteq L^\infty\) be convex. Assume that \(C\) is closed under almost sure convergence of uniformly bounded sequences, in the sense that whenever \((X_n)\subseteq C\) satisfies \[ \sup_n \|X_n\|_\infty < \infty \qquad\text{and}\qquad X_n \to X \ \text{a.s.}, \] we have \(X\in C\). Then \(C\) is \(\sigma(L^\infty,L^1)\)-closed.

The lemma isolates the only nontrivial functional-analytic step in the proof of Proposition 6. The Fatou property shows that each sub-level set \[ C_m=\{X\in L^\infty:\rho(X)\le m\} \] is closed under almost sure convergence of uniformly bounded sequences. Since \(C_m\) is also convex, Lemma 6 implies that each \(C_m\) is \(\sigma(L^\infty,L^1)\)-closed, which is exactly the lower semicontinuity of \(\rho\).

Proof. Suppose \(\rho\) has the Fatou property and let \[ C_m:=\set{X\in L^\infty:\rho(X)\le m}, \qquad m\in\mathbb R. \] Because \(\rho\) is convex, each \(C_m\) is convex. To prove \(\sigma(L^\infty,L^1)\)-lower semicontinuity of \(\rho\), it suffices to show that each \(C_m\) is \(\sigma(L^\infty,L^1)\)-closed. By Lemma 6, it suffices in turn to show that \(C_m\) is closed under almost sure convergence of uniformly bounded sequences. That is exactly what the Fatou property gives: if \(\sup_n\|X_n\|_\infty<\infty\) and \(X_n\to X\) a.s. with each \(X_n\in C_m\), then \[ \rho(X)\le \liminf_n \rho(X_n)\le m, \] so \(X\in C_m\). Hence each \(C_m\) is \(\sigma(L^\infty,L^1)\)-closed, and therefore \(\rho\) is \(\sigma(L^\infty,L^1)\)-lower semicontinuous.

For the converse, assume \(\rho\) is \(\sigma(L^\infty,L^1)\)-lower semicontinuous. Let \((X_n)\) satisfy \[ \sup_n \|X_n\|_\infty < \infty \qquad\text{and}\qquad X_n \to X \ \text{a.s.} \] We need to show that \(\rho(X)\le \liminf_n \rho(X_n)\). Because the sequence is uniformly bounded, say \[ |X_n|\le M \qquad\text{a.s. for all }n, \] and \(X_n\to X\) a.s., dominated convergence implies that for every \(Z\in L^1\), \[ \mathsf P(X_n Z)\to \mathsf P(XZ). \] That is exactly the statement that \[ X_n \to X \qquad\text{in }\sigma(L^\infty,L^1). \] Now lower semicontinuity of \(\rho\) for this topology gives \[ \rho(X)\le \liminf_n \rho(X_n). \] Hence \(\rho\) has the Fatou property.

Remark 12. Combined with Fenchel–Moreau, Proposition 6 yields the dual representation \[ \rho(X)=\sup_{Z\in L^1} \mathsf P(XZ)-\rho^*(Z) \] for every convex monetary risk measure with the Fatou property.

Remark 13. The \(L^1\) representation for a Fatou-continuous convex risk measure is generally a supremum, not a maximum: \[ \rho(X)=\sup_{Z\in L^1} \mathsf P(XZ)-\rho^*(Z). \] Suppose \(\rho(X)=\operatorname{ess\,sup} X\) and \(\set{X=\operatorname{ess\,sup} X}\) has probability zero. Then \(X<\operatorname{ess\,sup}X\) almost surely, so \(\mathsf P(XZ)<\operatorname{ess\,sup}X\) for every probability density \(Z\), and the supremum is not attained in \(L^1\).
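For a concrete instance, take the illustrative choice \(X(u)=u\) on \([0,1]\) with Lebesgue measure (not from the text). Then \(\operatorname{ess\,sup}X=1\) is reached only on a null set. The densities \(Z_n=n1_{[1-1/n,1]}\) push weight toward the top and give \(\mathsf P(XZ_n)=1-1/(2n)\), which approaches \(1\) but never reaches it. The sketch below evaluates this closed form in exact rational arithmetic.

```python
from fractions import Fraction

def pairing(n):
    """Exact P(X Z_n) for X(u) = u and Z_n = n * 1[1 - 1/n, 1] on ([0,1], Lebesgue).

    The integral of n * u over [a, 1] with a = 1 - 1/n equals n * (1 - a^2) / 2.
    """
    n = Fraction(n)
    a = 1 - 1 / n
    return n * (1 - a * a) / 2

for n in (1, 2, 10, 1000):
    gap = 1 - pairing(n)            # ess sup X minus P(X Z_n); equals 1/(2n) > 0
    print(n, pairing(n), gap)
```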

At the level of the full Banach dual, \((L^\infty)^*=ba\), one works instead with finitely additive dual variables and obtains the broader representation \[ \rho(X)=\max_{\mu\in ba}\ \mu(X)-\rho^*(\mu). \]

Some lower semicontinuity hypothesis is always needed for exact biconjugacy. For the dual pair \((L^\infty,L^1)\), the needed hypothesis is \(\sigma(L^\infty,L^1)\)-lower semicontinuity, which for convex monetary risk measures is equivalent to the Fatou property, as we have seen. For the full Banach dual pair \((L^\infty,ba)\), Fenchel–Moreau requires \(\sigma(L^\infty,ba)\)-lower semicontinuity. For convex functions on a Banach space, however, weak lower semicontinuity with respect to the full dual is equivalent to norm lower semicontinuity. Since a monetary risk measure is Lipschitz in the \(L^\infty\) norm, it is norm continuous, hence norm lower semicontinuous, and so exact biconjugacy over \(ba\) is automatic in the convex monetary setting.
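The Lipschitz bound follows from monotonicity and cash invariance. From \(X\le Y+\|X-Y\|_\infty\) we get \(\rho(X)\le\rho(Y)+\|X-Y\|_\infty\), and the same holds with \(X\) and \(Y\) swapped. The sketch below checks the bound numerically on a hypothetical four-state space. It uses the entropic risk measure \(\rho(X)=\log\mathsf P(e^{X})\), a standard convex monetary example chosen here purely for illustration.

```python
import math
import random

P = [0.1, 0.2, 0.3, 0.4]           # hypothetical reference probabilities

def entropic(X):
    """Entropic risk measure log E_P[exp(X)] (loss convention), computed stably."""
    m = max(X)                      # cash invariance makes this shift exact
    return m + math.log(sum(p * math.exp(x - m) for p, x in zip(P, X)))

def sup_norm(X, Y):
    return max(abs(x - y) for x, y in zip(X, Y))

random.seed(0)
worst_ratio = 0.0
for _ in range(10_000):
    X = [random.uniform(-5, 5) for _ in P]
    Y = [random.uniform(-5, 5) for _ in P]
    worst_ratio = max(worst_ratio, abs(entropic(X) - entropic(Y)) / sup_norm(X, Y))
print(worst_ratio)                  # stays at most 1, up to rounding
```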

Remark 14. For an arbitrary function, lower semicontinuity for the weak topology \(\sigma(L^\infty,ba)\) implies norm lower semicontinuity, since the norm topology is finer. The converse is false in general. For convex functions, however, the converse holds: a convex function on a Banach space is norm lower semicontinuous if and only if it is lower semicontinuous for the weak topology induced by the full dual. Equivalently, its epigraph is norm closed if and only if it is weakly closed, because a convex subset of a Banach space is norm closed if and only if it is closed for the weak topology induced by the full dual (Mazur). Thus for a convex monetary risk measure on \(L^\infty\), Lipschitz continuity gives norm lower semicontinuity, and convexity upgrades this to \(\sigma(L^\infty,ba)\)-lower semicontinuity. The upgrade stops there. It does not reach the coarser weak* topology \(\sigma(L^\infty,L^1)\), which is why the Fatou property is a genuine restriction.

Remark 15. The scenario interpretation connects the convex-duality theory to several familiar strands of insurance and risk-management practice. In internal models and regulatory work, one often studies named or highly specified stresses: catastrophe events, market dislocations, reserve deterioration, operational failures, or multi-factor combinations of these. Lloyd’s Realistic Disaster Scenarios provide a good example of such concrete scenario design. A dual measure \(Q\) plays a similar mathematical role, though usually at a more abstract level: it specifies a re-weighting of the reference model toward adverse states, and the dual representation asks how costly the position looks under that stress.

The same interpretation also links risk measures to the robust and ambiguity-sensitive literature. From that viewpoint, the reference measure \(\mathsf P\) is not trusted completely. Instead, we contemplate a family of plausible alternatives \(\mathsf Q\), and assess risk by guarding against unfavorable members of that family, either equally in the coherent case or with penalties in the convex case. The penalty function records how far one is willing to move away from the baseline model, or how implausible, expensive, or ambiguous a given scenario is judged to be. Thus the dual representation can be read as a mathematical formalization of model uncertainty: risk is not evaluated under one probabilistic view of the world, but under a controlled family of stressed views.

Law invariance changes the flavor of the scenarios. In the general case, \(\mathsf Q\) may encode very specific statewise stresses. In the law-invariant case, the relevant stresses are distributional rather than narrative: they are tied to ranks, quantiles, tail layers, or return periods, rather than to named states of the world. Distortion and spectral risk measures make that especially clear. Their scenarios are not “earthquake in region A plus equity shock in sector B,” but rather stresses attached to adverse percentiles of the loss distribution. That distinction mirrors an important divide in practice between narrative scenarios and return-period or percentile-based capital views.

Taking the supremum of a family of convex risk measures is a standard recipe for creating a new one; it moves us from left to right in the Atlas. It is justified by Proposition 7.

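Proposition 7 Let \((\rho_i)_{i\in I}\) be a family of convex monetary risk measures on \(L^\infty\), and assume that each \(\rho_i\) admits the dual representation \[ \rho_i(X)=\sup_{Z\in\mathcal{D}}\, \mathsf{P}(XZ)-\rho_i^*(Z), \] for some common dual domain \(\mathcal{D}\subseteq L^1\). Assume also that \[ \sup_{i\in I}\rho_i(0)<\infty. \] Define \[ \rho(X):=\sup_{i\in I}\rho_i(X). \] Then \(\rho\) is finite-valued, convex, and monetary, and it admits the dual representation \[ \rho(X)=\sup_{Z\in\mathcal{D}}\, \mathsf{P}(XZ)-\alpha(Z), \qquad \alpha(Z):=\inf_{i\in I}\rho_i^*(Z). \] The penalty \(\alpha\) need not be convex. The conjugate \(\rho^*\) satisfies \(\rho^*\le\alpha\), and it is the \(\sigma(L^1,L^\infty)\)-lower semicontinuous convex hull of \(\alpha\), where \(\alpha\) is extended by \(+\infty\) off \(\mathcal D\).

Proof. Each \(\rho_i\) is monotone and cash invariant, and \(X\le\|X\|_\infty\), so for every \(X\in L^\infty\), \[ \rho_i(X)\le \rho_i(\|X\|_\infty)=\rho_i(0)+\|X\|_\infty, \] and therefore \[ \rho(X)=\sup_{i\in I}\rho_i(X) \le \sup_{i\in I}\rho_i(0)+\|X\|_\infty<\infty. \] Since also \(\rho\ge\rho_i>-\infty\) for any fixed \(i\), \(\rho\) is finite-valued. A supremum of convex functions is convex, and a finite-valued supremum of monotone, cash-invariant functionals is again monotone and cash invariant, so \(\rho\) is convex and monetary.

Now compute: \[ \begin{aligned} \rho(X) &=\sup_{i\in I}\rho_i(X) \\ &=\sup_{i\in I}\,\sup_{Z\in\mathcal{D}}\, \mathsf{P}(XZ)-\rho_i^*(Z) \\ &=\sup_{Z\in\mathcal{D}}\,\sup_{i\in I}\, \mathsf{P}(XZ)-\rho_i^*(Z) \\ &=\sup_{Z\in\mathcal{D}}\, \mathsf{P}(XZ)-\inf_{i\in I}\,\rho_i^*(Z), \end{aligned} \] which is the claimed representation with penalty \(\alpha\). Since \(\rho\ge\rho_i\), we have \(\rho^*\le\rho_i^*\) for every \(i\), hence \(\rho^*\le\alpha\). Moreover, \(\rho=\alpha^*\) for the pairing \((L^\infty,L^1)\), so Fenchel–Moreau gives \(\rho^*=\alpha^{**}\), the lower semicontinuous convex hull of \(\alpha\). The inequality can be strict. If \(\rho_1\) and \(\rho_2\) are linear with densities \(Z_1\ne Z_2\), then \(\alpha\) is the indicator of \(\set{Z_1,Z_2}\), while \(\rho^*\) is the indicator of the segment joining them. Note that the derivation is elementary; no minimax theorem is involved.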
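A finite sketch of the supremum construction, with hypothetical data: two linear risk measures on a three-state space with uniform \(\mathsf P\) and densities \(Z_1,Z_2\). Their supremum is evaluated twice. The first time uses only the two densities. The second time uses the whole segment \(\set{\lambda Z_1+(1-\lambda)Z_2}\). The values agree because \(\lambda\mapsto\mathsf P(XZ_\lambda)\) is affine. So the same \(\rho\) is represented both by a penalty supported on two points and by one supported on their convex hull.

```python
import math

P = [1 / 3, 1 / 3, 1 / 3]
Z1 = [1.5, 1.0, 0.5]    # hypothetical scenario densities (mean one under P)
Z2 = [0.5, 1.0, 1.5]

def pair(X, Z):
    """P(X Z) on the three-state space."""
    return sum(p * x * z for p, x, z in zip(P, X, Z))

def rho(X):
    """Supremum of the two linear measures (dual set {Z1, Z2})."""
    return max(pair(X, Z1), pair(X, Z2))

def rho_segment(X, steps=100):
    """Same supremum taken over the segment {lam*Z1 + (1-lam)*Z2 : 0 <= lam <= 1}."""
    best = -math.inf
    for k in range(steps + 1):
        lam = k / steps
        Z = [lam * a + (1 - lam) * b for a, b in zip(Z1, Z2)]
        best = max(best, pair(X, Z))
    return best

for X in ([1.0, 0.0, 0.0], [0.0, 0.0, 2.0], [3.0, -1.0, 2.0]):
    print(X, rho(X), rho_segment(X))
```

The midpoint density \([1,1,1]\) lies on the segment but not in \(\set{Z_1,Z_2}\), which is why a two-point penalty is not convex.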

Theorem 2 identifies the additional continuity condition, beyond the Fatou property, under which the minimal penalty on \(ba\) is finite only on countably additive probabilities and the supremum in the countably additive dual representation is attained.

Theorem 2 Let \(\rho:L^\infty\to\mathbb R\) be a convex monetary risk measure with the Fatou property, and let \[ \alpha_{\min}(\nu):=\sup_{X\in\mathcal A_\rho}\nu(X), \qquad \mathcal A_\rho:=\{X\in L^\infty:\rho(X)\le 0\}, \] denote its minimal penalty on \(ba\). Then the following are equivalent.

- \(\rho\) is continuous from above: if \(X_n\downarrow X\) almost surely, then \[ \rho(X_n)\downarrow \rho(X). \]

- The minimal penalty takes finite values only on countably additive probabilities: \[ \alpha_{\min}(\nu)<\infty \quad\Longrightarrow\quad \nu\in L^1,\ \nu\ge 0,\ \mathsf P\nu=1. \]

- In the countably additive dual representation \[ \rho(X)=\sup_{Z\in L^1}\bigl\{\mathsf P(XZ)-\rho^*(Z)\bigr\}, \] the supremum is attained for every \(X\in L^\infty\).

In the payoff sign convention used by Föllmer and Schied, condition (1) is written as continuity from below.

See Section 8.9 for a sketch of the proof.

Remark. The theorem explains the role of continuity from above in the Atlas. The Fatou property is enough to obtain an \(L^1\) representation, but not enough to force attainment: \(\operatorname{ess\,sup}\) is the standard counterexample. Continuity from above is the extra condition that eliminates purely finitely additive dual variables from the minimal penalty and thereby upgrades the countably additive dual supremum to a maximum.

Let \(\rho:L^\infty\to\mathbb R\) be a convex monetary risk measure with the Fatou property. If \(\rho\) is continuous from above, then it is continuous from below.

Proof. By Theorem 2, continuity from above implies that for every \(X\in L^\infty\) the supremum in the countably additive dual representation \[ \rho(X)=\sup_{Z\in L^1}\,\mathsf P(XZ)-\rho^*(Z) \] is attained.

Now let \(X_n\uparrow X\) almost surely. By monotonicity, \[ \rho(X_n)\le \rho(X) \qquad\text{for all }n, \] so \((\rho(X_n))\) is increasing and bounded above by \(\rho(X)\).

Choose \(Z\in L^1\) attaining the dual maximum for \(X\), so \[ \rho(X)=\mathsf P(XZ)-\rho^*(Z). \] Then for every \(n\), \[ \rho(X_n)\ge \mathsf P(X_nZ)-\rho^*(Z). \] Since \(X_n\uparrow X\) almost surely and the sequence is uniformly bounded in \(L^\infty\), dominated convergence gives \[ \mathsf P(X_nZ)\to \mathsf P(XZ). \] Therefore \[ \liminf_{n\to\infty}\rho(X_n)\ge \mathsf P(XZ)-\rho^*(Z)=\rho(X). \] Since \(\rho(X_n)\le \rho(X)\) for all \(n\), we have \[ \limsup_{n\to\infty}\rho(X_n)\le \rho(X)\le \liminf_{n\to\infty}\rho(X_n)\le \limsup_{n\to\infty}\rho(X_n). \] Hence \[ \lim_{n\to\infty}\rho(X_n)=\rho(X). \]

3.3 The Coherent Case

Now assume \(\rho\) is coherent, that is, monetary, subadditive, and positively homogeneous.

Proposition 8 Let \(\rho:L^\infty\to\mathbb R\) be coherent with the Fatou property. Then \(\rho^*\) is the convex indicator function of a \(\sigma(L^1,L^\infty)\)-closed convex set \[ \mathcal M\subseteq \set{Z \in L^1 : Z \ge 0,\ \mathsf PZ=1}, \] that is, \[ \rho^*(Z)= \begin{cases} 0, & Z\in \mathcal M,\\ +\infty, & Z\notin \mathcal M. \end{cases} \] Consequently, \[ \rho(X)=\sup_{Z\in \mathcal M}\mathsf P(XZ). \]

Proof. By Proposition 6, \(\rho\) is \(\sigma(L^\infty,L^1)\)-lower semicontinuous. Hence the Fenchel–Moreau theorem (Theorem 1) applies and yields \[ \rho(X)=\sup_{Z\in L^1}\{\mathsf P(XZ)-\rho^*(Z)\}, \] where \[ \rho^*(Z)=\sup_{X\in L^\infty}\{\mathsf P(XZ)-\rho(X)\}. \] We now show that \(\rho^*\) is the indicator of a closed convex set. Fix \(Z\in L^1\). If there exists \(X_0\) such that \(\mathsf P(X_0Z)-\rho(X_0)>0\), then for every \(\lambda>0\), positive homogeneity gives \[ \mathsf P((\lambda X_0)Z)-\rho(\lambda X_0) = \lambda\bigl(\mathsf P(X_0Z)-\rho(X_0)\bigr)\to\infty \qquad\text{as }\lambda\to\infty. \] Hence \(\rho^*(Z)=+\infty\). On the other hand, suppose \(\mathsf P(XZ)-\rho(X)\le 0\) for all \(X\in L^\infty\). Positive homogeneity gives \(\rho(0)=\rho(2\cdot 0)=2\rho(0)\), so \(\rho(0)=0\), and taking \(X=0\) gives \(\rho^*(Z)\ge -\rho(0)=0\). The definition of \(\rho^*\) implies \(\rho^*(Z)\le 0\), and therefore \(\rho^*(Z)=0\). Together, these arguments show \(\rho^*(Z)\in\set{0,+\infty}\), and \[ \begin{aligned} \mathcal M &:=\set{Z\in L^1:\rho^*(Z)=0} \\ &= \set{Z\in L^1:\mathsf P(XZ)\le \rho(X)\ \forall X\in L^\infty}. \end{aligned} \] Since \(\rho^*\) is convex and \(\sigma(L^1,L^\infty)\)-lower semicontinuous, the set \(\mathcal M\) is convex and \(\sigma(L^1,L^\infty)\)-closed. Thus \(\rho^*\) is the convex indicator of \(\mathcal M\).

Substituting into the biconjugate formula gives \[ \rho(X) = \sup_{Z\in L^1} \mathsf P(XZ)-\rho^*(Z) = \sup_{Z\in \mathcal M}\mathsf P(XZ). \] Finally, Proposition 5 (applied with \(\alpha=\rho^*\)) shows that \(\mathcal M\) is a closed convex set of probability densities, that is, of non-negative \(Z\) with \(\mathsf PZ=1\).

Remark 16. A coherent risk measure is exactly the support function of a closed convex set of dual elements. The convex case allows a nontrivial penalty \(\rho^*\); positive homogeneity collapses that penalty to an indicator.
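A minimal finite-state sketch of this support-function view, with hypothetical data. There are four equally likely states and three scenario densities \(Z_k\), whose convex hull plays the role of \(\mathcal M\). A linear functional attains its maximum over a polytope at a vertex, so it is enough to maximize over the listed densities. The random checks are consistent with monotonicity, cash invariance, positive homogeneity, and subadditivity, though they do not prove them.

```python
import random

P = [0.25, 0.25, 0.25, 0.25]
M_VERTICES = [                      # hypothetical scenario densities, mean one under P
    [1.0, 1.0, 1.0, 1.0],
    [0.0, 0.0, 2.0, 2.0],
    [0.0, 0.0, 0.0, 4.0],
]

def rho(X):
    """Support function of conv(M_VERTICES): max_k P(X Z_k)."""
    return max(sum(p * x * z for p, x, z in zip(P, X, Z)) for Z in M_VERTICES)

def random_loss():
    return [random.uniform(-3, 3) for _ in P]

random.seed(1)
checks = []
for _ in range(1000):
    X, Y = random_loss(), random_loss()
    lam, m = random.uniform(0, 5), random.uniform(-2, 2)
    W = [max(x, y) for x, y in zip(X, Y)]                                         # W >= X statewise
    checks.append(rho(X) <= rho(W) + 1e-12)                                        # monotone
    checks.append(abs(rho([x + m for x in X]) - rho(X) - m) < 1e-9)                # cash invariant
    checks.append(abs(rho([lam * x for x in X]) - lam * rho(X)) < 1e-9)            # positively homogeneous
    checks.append(rho([x + y for x, y in zip(X, Y)]) <= rho(X) + rho(Y) + 1e-12)   # subadditive
print(all(checks))
```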

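The set \(\mathcal M\) can be identified as \(\partial\rho(0)\) when \(\rho\) is normalized. For a coherent \(\rho\) this is automatic, since positive homogeneity gives \(\rho(0)=\rho(2\cdot 0)=2\rho(0)\).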

Corollary 2 Let \(\rho:L^\infty\to\mathbb R\) be coherent, normalized, and have the Fatou property. Then \[ \partial \rho(0) = \set{Z\in L^1:\rho^*(Z)=0 }. \]

Proof. For any proper convex function with \(\rho(0)=0\), \[ Z\in \partial \rho(0) \iff \rho(Y)\ge \rho(0)+\mathsf P(ZY) \ \text{for all }Y \iff \mathsf P(ZY)-\rho(Y)\le 0 \ \text{for all }Y. \] Taking the supremum over \(Y\) gives \[ Z\in \partial \rho(0) \iff \rho^*(Z)\le 0. \] But always \(\rho^*(Z)\ge -\rho(0)=0\), by testing at \(Y=0\). Hence \[ Z\in \partial \rho(0) \iff \rho^*(Z)=0. \]

3.4 Dual Geometry, Extreme Points, and Law-Invariant Orbits

Assume that \(\rho\) is a convex monetary risk measure on \(L^\infty\) with the Fatou property. Then \(\rho\) admits the countably additive dual representation \[ \rho(X)=\sup_{Z\in L^1}\, \mathsf P(XZ)-\rho^*(Z), \] where \[ \rho^*(Z)=\sup_{X\in L^\infty}\, \mathsf P(XZ)-\rho(X). \] By Proposition 5, the effective domain \[ D_1:=\operatorname{dom}\rho^* =\set{Z\in L^1: Z\ge 0,\ \mathsf PZ=1,\ \rho^*(Z)<\infty} \] consists of probability densities. In this section we relate properties of \(\rho\) to the geometry of its dual objects.

In the coherent case, \(\rho^*\) takes only the values \(0\) and \(\infty\), so it is the indicator of a closed convex set \[ D\subseteq \set{Z\in L^1: Z\ge 0,\ \mathsf PZ=1}, \] and the representation becomes \[ \rho(X)=\sup_{Z\in D}\mathsf P(ZX). \] Thus the natural dual object for a coherent risk measure is a closed convex set of probability densities. Convexity of \(D\) follows because \(D=\set{Z:\rho^*(Z)=0}\) and \(\rho^*\) is convex.

In the convex, non-coherent case, the domain alone is no longer enough: we must retain the penalty values as well. The natural dual object is then the epigraph \[ D:=\operatorname{epi}(\rho^*) =\set{(Z,t)\in L^1\times \mathbb R:\rho^*(Z)\le t}, \] and the dual representation may be written \[ \rho(X)=\sup_{(Z,t)\in D} \, \mathsf P(ZX)-t. \] Coherence is therefore described by a set of scenarios, whereas convexity is described by a set of scenarios together with a cost attached to each one.
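The epigraph picture can be checked on a standard convex, non-coherent example. This example does not appear in the text and is used here only for illustration: the entropic risk measure \(\rho(X)=\gamma^{-1}\log\mathsf P(e^{\gamma X})\), whose penalty is the relative entropy \(H(\mathsf Q\mid\mathsf P)/\gamma\). Each \(\mathsf Q\) contributes the epigraph point \((\mathsf Q, H(\mathsf Q\mid\mathsf P)/\gamma)\). The maximizing scenario is the exponential tilt \(\mathsf Q^*\propto e^{\gamma X}\mathsf P\). The numbers below are hypothetical.

```python
import math
import random

P = [0.5, 0.3, 0.2]                 # hypothetical reference model
X = [0.0, 1.0, 3.0]                 # hypothetical loss
gamma = 0.8                         # risk-aversion parameter

def entropic(X, P, gamma):
    return math.log(sum(p * math.exp(gamma * x) for p, x in zip(P, X))) / gamma

def relative_entropy(Q, P):
    return sum(q * math.log(q / p) for q, p in zip(Q, P) if q > 0)

def penalized_value(X, Q, P, gamma):
    """E_Q[X] - t for the epigraph point (Q, t) with t = H(Q | P) / gamma."""
    return sum(q * x for q, x in zip(Q, X)) - relative_entropy(Q, P) / gamma

# Exponential tilt of P toward large losses: the maximizing scenario.
w = [p * math.exp(gamma * x) for p, x in zip(P, X)]
Q_star = [v / sum(w) for v in w]
print(entropic(X, P, gamma), penalized_value(X, Q_star, P, gamma))   # agree up to rounding

# Randomly drawn scenarios never do better than the tilt.
random.seed(2)
others = []
for _ in range(1000):
    r = [random.random() + 1e-12 for _ in P]
    others.append([v / sum(r) for v in r])
print(max(penalized_value(X, Q, P, gamma) for Q in others) <= entropic(X, P, gamma) + 1e-12)
```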

Suppose now that the relevant dual object is compact in the appropriate topology. In the coherent case, this means compactness of \(D\) in \(\sigma(L^1,L^\infty)\). Then Krein–Milman gives \[ D=\overline{\operatorname{co}}(\operatorname{ext}(D)), \] that is, \(D\) is the closed convex hull of its extreme points. For fixed \(X\), the map \[ Z\mapsto \mathsf P(ZX) \] is affine and \(\sigma(L^1,L^\infty)\)-continuous, so it attains its supremum on the compact set \(D\). Bauer's maximum principle then shows that the maximum is attained at an extreme point. Hence \[ \rho(X)=\max_{Z\in \operatorname{ext}(D)} \mathsf P(ZX). \] Thus the extreme points are the active dual scenarios. See Aliprantis and Border (2006) for Bauer's maximum principle and the Krein–Milman theorem.

The same idea extends to the convex case. For fixed \(X\), the map \[ (Z,t)\mapsto \mathsf P(ZX)-t \] is affine and continuous on \(L^1\times\mathbb R\) for the product topology \(\sigma(L^1,L^\infty)\times\) the usual topology on \(\mathbb R\). Hence, whenever the relevant maximizing slice of \(\operatorname{epi}(\rho^*)\) is compact and the supremum is attained, Bauer’s principle again shows that the maximum occurs at an extreme point of the epigraph. So the convex case has the same geometric flavor, except that the primitive object is now the epigraph of the penalty rather than the dual domain alone.

Compactness does not come for free. For example, on an atomless space, if \(\rho(X)=\operatorname{ess\,sup}X\), then \[ \rho(X)=\sup_{Z\in D}\mathsf P(ZX), \qquad D=\set{Z\in L^1: Z\ge 0,\ \mathsf PZ=1}, \] but \(D\) is not \(\sigma(L^1,L^\infty)\)-compact. Correspondingly, the supremum is attained if and only if \(X\) equals its essential supremum on a set of positive probability.

A second organizing principle concerns law invariance and the symmetry it imposes on the dual geometry. On an atomless space, law invariance says that \(\rho\) depends only on the distribution of \(X\), not on the labels of states. In particular, law invariance implies \[ \rho(X\circ T)=\rho(X) \] for every measure-preserving transformation \(T:\Omega\to\Omega\).

The corresponding symmetry on the dual side is most naturally expressed in terms of measures rather than densities. Let \(\mathsf Q\ll \mathsf P\) be a probability measure with Radon–Nikodym derivative \[ Z=\frac{d\mathsf Q}{d\mathsf P}. \] For a measure-preserving transformation \(T\), define the push-forward measure in the usual way: \[ T_\#\mathsf Q(A):=\mathsf Q(T^{-1}(A)). \] Then \[ \langle X\circ T,\mathsf Q\rangle=\langle X,T_\#\mathsf Q\rangle. \] If \(T\) is invertible and measure preserving, then \(T_\#\mathsf Q\) has density \(Z\circ T^{-1}\) with respect to \(\mathsf P\). Thus the density picture may be viewed as a rearrangement of \(Z\), but the measure formulation is more general.
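On a finite space with uniform \(\mathsf P\), the measure-preserving bijections are exactly the permutations, which makes these push-forward identities easy to check. The sketch below uses a hypothetical permutation \(T\), loss \(X\), and density \(Z\). It confirms \(\langle X\circ T,\mathsf Q\rangle=\langle X,T_\#\mathsf Q\rangle\) and that \(T_\#\mathsf Q\) has density \(Z\circ T^{-1}\).

```python
# Omega = {0, ..., n-1} with uniform P: measure-preserving bijections are permutations.
n = 5
P = [1 / n] * n
t = [2, 0, 4, 1, 3]                 # hypothetical permutation, T(i) = t[i]
t_inv = [0] * n
for i, j in enumerate(t):
    t_inv[j] = i                    # T^{-1}(j) = i

X = [3.0, -1.0, 0.5, 2.0, 7.0]      # hypothetical loss
Z = [0.5, 1.5, 1.0, 0.2, 1.8]       # hypothetical density dQ/dP (mean one)

def pairing(X, Z):
    """<X, Q> = P(X Z) for the measure Q with density Z."""
    return sum(p * x * z for p, x, z in zip(P, X, Z))

X_circ_T = [X[t[i]] for i in range(n)]     # X o T
Z_push = [Z[t_inv[j]] for j in range(n)]   # Z o T^{-1}

# Push-forward computed directly: (T#Q)({j}) = Q(T^{-1}{j}).
push_mass = [0.0] * n
for i in range(n):
    push_mass[t[i]] += P[i] * Z[i]

print(pairing(X_circ_T, Z), pairing(X, Z_push))          # equal up to rounding
print(all(abs(push_mass[j] - P[j] * Z_push[j]) < 1e-15 for j in range(n)))
```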

The next proposition makes the link between primal and dual symmetry explicit.

Proposition 9 Assume the underlying probability space is atomless, and let \(\rho:L^\infty\to\mathbb R\) be a convex monetary risk measure with the Fatou property. Then \(\rho\) is law invariant if and only if \(\rho^*\) is law invariant, in the sense that \[ \frac{d\mathsf Q_1}{d\mathsf P}\stackrel{d}{=}\frac{d\mathsf Q_2}{d\mathsf P} \quad\Longrightarrow\quad \rho^*(\mathsf Q_1)=\rho^*(\mathsf Q_2). \]

Proof. We identify \(\mathsf Q\ll\mathsf P\) with its density \(Z=d\mathsf Q/d\mathsf P\).

Assume first that \(\rho\) is law invariant, and let \(Z,Z'\in L^1\) satisfy \(Z\stackrel{d}{=} Z'\). To show \(\rho^*(Z)=\rho^*(Z')\), fix \(X\in L^\infty\). Since the space is atomless, any coupling of \(X\) and \(Z\) may be realized with \(Z'\) in place of \(Z\): there exists \(\hat X\in L^\infty\) such that \[ (\hat X,Z')\stackrel{d}{=}(X,Z). \] Therefore \[ \mathsf P(\hat X Z')=\mathsf P(XZ). \] Also \(\hat X\stackrel{d}{=}X\), so law invariance gives \(\rho(\hat X)=\rho(X)\). Hence \[ \mathsf P(XZ)-\rho(X)=\mathsf P(\hat X Z')-\rho(\hat X)\le \rho^*(Z'). \] Taking the supremum over \(X\) yields \(\rho^*(Z)\le \rho^*(Z')\), and symmetry gives equality.

Conversely, assume \(\rho^*\) is law invariant. Let \(X,X'\in L^\infty\) satisfy \(X\stackrel{d}{=}X'\). Fix \(Z\in L^1\). Since the space is atomless and \(X\stackrel{d}{=}X'\), there exists \(Z'\in L^1\) such that \[ (X',Z')\stackrel{d}{=}(X,Z). \] Then \[ \mathsf P(X'Z')=\mathsf P(XZ), \] and \(Z'\stackrel{d}{=}Z\), so by law invariance of \(\rho^*\), \[ \rho^*(Z')=\rho^*(Z). \] Therefore \[ \mathsf P(XZ)-\rho^*(Z)=\mathsf P(X'Z')-\rho^*(Z')\le \rho(X'). \] Taking the supremum over \(Z\) gives \(\rho(X)\le \rho(X')\). By symmetry, \(\rho(X')\le \rho(X)\), and therefore \(\rho(X)=\rho(X')\).

This proposition lets us describe law-invariant dual geometry in terms of orbits under measure-preserving rearrangements. For a dual probability measure \(\mathsf Q\ll\mathsf P\), define its orbit by \[ [\mathsf Q]:=\set{T_\#\mathsf Q:T\text{ measure preserving}}. \] At the density level, these correspond to rearrangements of \(Z=d\mathsf Q/d\mathsf P\). A law-invariant coherent dual set is built from the closed convex hull of such orbits; in the convex case, the same applies to the epigraph, with the penalty constant along each orbit.

This viewpoint leads to a useful informal picture. A single dual measure gives a linear expectation. One orbit, together with its law-invariant closed convex hull, gives the spectral/comonotonic class. Several distinct orbits are needed for law-invariant coherent examples that are not comonotonic additive. Finally, adding a nontrivial penalty on top of the dual geometry produces convex, non-coherent examples. This is the geometric intuition behind Kusuoka-type representations and their refinements. These ideas are developed in Shapiro (2012).
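A finite illustration of the one-orbit picture, again on a space of \(n\) equally likely states where rearrangements are permutations. The spectral density \(Z\) below is hypothetical (increasing, mean one). Maximizing \(\mathsf P(XZ')\) over the orbit of \(Z\) by brute force gives the same value as pairing the sorted losses with the sorted weights, as the rearrangement inequality predicts. That sorted pairing is the finite-space form of a spectral risk measure.

```python
import itertools

n = 5
Z = [0.0, 0.5, 1.0, 1.5, 2.0]       # hypothetical spectral density: increasing, mean one

def spectral(X):
    """Comonotone pairing: sorted losses against increasing weights, averaged over states."""
    return sum(x * z for x, z in zip(sorted(X), Z)) / n

def orbit_sup(X):
    """Brute-force max of P(X Z') over the orbit Z' = Z o sigma, sigma a permutation."""
    return max(sum(x * Z[s] for x, s in zip(X, sigma)) / n
               for sigma in itertools.permutations(range(n)))

X = [4.0, -2.0, 0.5, 3.0, 1.0]      # hypothetical loss
print(spectral(X), orbit_sup(X))    # both 2.75
```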

Figure 3 provides a schematic of the geometry for the dual representations of monetary risk measures. It highlights the interplay between their algebraic structure and the shape of their penalty function epigraphs. The horizontal axis represents decreasing restrictions on the measure’s algebraic properties: moving from left to right, the structure is systematically relaxed from purely linear (additive), to comonotonic additive, to sub-additive (coherent), and finally to general convex. This algebraic relaxation dictates a corresponding decrease in restrictions (increase in flexibility) on the dual set, which expands from a single fixed point (linear), to a structured capacity core (comonotonic), to an arbitrary flat base domain (coherent), and ultimately into a volumetric epigraph permitting arbitrary penalty heights (convex). The vertical axis illustrates the additional symmetry imposed by law invariance. The top row depicts generic, asymmetric dual sets, and the bottom demonstrates how law invariance forces these spaces into symmetric configurations with respect to the reference measure \(\mathsf P\). By Ryff-type rearrangement results, this symmetrization is represented by passing from individual dual elements to the closed convex hull of their law-invariant orbits under measure-preserving transformations. Notably, this visualizes how symmetrizing the rigid polytope of a general comonotonic capacity restricts it to a distortion capacity, generating the characteristic symmetric orbit of a spectral risk measure.

Finally, Figure 4 is a schematic of the topological structure of \(ba=(L^\infty)^*\), the Banach dual of \(L^\infty\), consisting of bounded finitely additive measures. The outer grey boundary represents all of \(ba\). Nestled within it is the white region, \(ba_{1,+}\), representing the convex, weak* compact subset of normalized, positive finitely additive probability measures which is the generalized dual domain for coherent and convex risk measures defined on \(L^\infty\).

The defining visual feature of the spaces is the stylized \(H\)-tree mesh, drawn in cyan in the general vector space (\(L^1\)) and in green in the positive probability cone (\(L^1_{1,+}\)). This mesh represents the subspace of countably additive measures, which can be identified with densities via the Radon–Nikodym theorem. The skeletal nature is deliberate: it visualizes Goldstine's theorem. By Goldstine, the unit ball of \(L^1\) is weak* dense in the unit ball of \(ba\); therefore, the mesh must reach into every weak* neighborhood of the larger space. However, \(L^1\) is a norm-closed proper subspace of \(ba\), so it has empty interior in the norm topology and cannot be drawn as a solid volume. It is everywhere in the weak* sense, yet volumetrically nowhere in the norm sense. This leaves infinitely many porous gaps (the white and grey voids) where measures that are not countably additive reside.

The magnified callout to the right illustrates the mechanics of the Yosida–Hewitt decomposition and a counter-intuitive feature of the weak* topology. The thick green branches represent the local fibers of the \(L^1_{1,+}\) mesh. A sequence of perfectly well-behaved, countably additive densities \((Z_n)\) lives strictly on these fibers. By Banach–Alaoglu, it has weak* cluster points in \(ba\). These are limits along subnets, meaning that \(\int X Z_n\, d\mathsf P\) converges along the subnet for every bounded payoff \(X \in L^\infty\). Because \(L^1\) is not weak* closed in \(ba\), such a limit need not be a standard density. (A weak* convergent sequence of densities does have a density as its limit, because \(L^1\) is weakly sequentially complete, so the escape into \(ba\) happens only along nets.) The red dashed path tracks the sequence as it leaves the countably additive fibers and enters the white void, converging along a subnet to the red point \(\mu_{\mathit{pfa}}\), a purely finitely additive measure. These objects carry no density and are concentrated on events of vanishing probability: for every \(\varepsilon>0\) there is an event \(A\) with \(\mathsf P(A)<\varepsilon\) and \(\mu_{\mathit{pfa}}(A)=\mu_{\mathit{pfa}}(\Omega)\). In the context of risk measurement, these generalized measures act analogously to Dirac deltas at the essential supremum of a random variable. The diagram highlights why restricting dual representations to standard \(L^1\) densities is insufficient. Maximizing families of worst-case scenarios (e.g., for ess sup) push the defining risk weights off the \(L^1\) mesh and into \(ba\).

4 Four Deeper Results

4.1 Law Invariant Risk Measures Preserve Second Order Stochastic Dominance

Definition 5 For \(X,Y \in L^\infty\):

\(X \preceq_{cx} Y\) means that \(X\) is dominated by \(Y\) in convex order, that is, \[ \mathsf P(\phi(X)) \le \mathsf P(\phi(Y)) \qquad \text{for every convex }\phi:\mathbb R\to\mathbb R. \] On \(L^\infty\) these expectations always exist: a convex \(\phi:\mathbb R\to\mathbb R\) is continuous, hence bounded on the bounded ranges of \(X\) and \(Y\).

\(X \preceq_{ssd} Y\) means that \(X\) is dominated by \(Y\) in second-order stochastic dominance (equivalently, in increasing convex order for losses), that is, \[ \mathsf P(\phi(X)) \le \mathsf P(\phi(Y)) \qquad \text{for every increasing convex }\phi:\mathbb R\to\mathbb R. \] Thus \(Y\) is the riskier loss.

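Remark 17. Taking \(\phi(x)=x\) and \(\phi(x)=-x\) in the definition of convex order shows that \(X \preceq_{cx} Y\) implies that \(X\) and \(Y\) have the same mean. Conversely, when the means agree, increasing convex functions already suffice. On the bounded joint range of \(X\) and \(Y\), every convex \(\phi\) is an affine function plus a nonnegative mixture of the increasing convex functions \(x\mapsto(x-t)^+\), and the affine part has the same expectation under both laws. As a result, if \(\mathsf P X=\mathsf P Y\), then \[ X \preceq_{ssd} Y \iff X \preceq_{cx} Y. \]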
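For finitely supported losses both orders can be checked mechanically. The sketch below relies on a standard fact not stated in the text: for such variables, \(X\preceq_{ssd}Y\) holds exactly when the stop-loss transforms satisfy \(\mathsf P(X-t)^+\le\mathsf P(Y-t)^+\) for every \(t\). Because these transforms are piecewise linear with kinks at the support points, it suffices to test those points. Convex order then adds the equal-mean condition of Remark 17. The helper names are hypothetical.

```python
from fractions import Fraction as F

# A finitely supported law is a list of (value, probability) pairs.

def stop_loss(law, t):
    """Stop-loss transform P(X - t)^+ of a finitely supported law."""
    return sum(p * max(x - t, 0) for x, p in law)

def mean(law):
    return sum(p * x for x, p in law)

def ssd_le(law_x, law_y):
    """X <=_ssd Y iff P(X-t)^+ <= P(Y-t)^+ for all t. The difference of the two
    transforms is piecewise linear with kinks at support points, so checking
    the support points suffices."""
    kinks = {x for x, _ in law_x} | {y for y, _ in law_y}
    return all(stop_loss(law_x, t) <= stop_loss(law_y, t) for t in kinks)

def cx_le(law_x, law_y):
    """Convex order = increasing convex order plus equal means (Remark 17)."""
    return mean(law_x) == mean(law_y) and ssd_le(law_x, law_y)

x = [(F(1), F(1))]                          # the constant loss 1
y = [(F(0), F(1, 2)), (F(2), F(1, 2))]      # a fair spread around 1
y_shift = [(v + 1, p) for v, p in y]        # y plus one unit of cash

print(ssd_le(x, y), cx_le(x, y))            # True True
print(ssd_le(y, x))                         # False: y is the riskier loss
print(ssd_le(y, y_shift), cx_le(y, y_shift))  # True False: the means differ
```

Exact fractions keep the equal-mean test in `cx_le` free of rounding noise.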

On an atomless probability space, convex order admits two standard characterizations. Ryff’s theorem says that \(X \preceq_{cx} Y\) iff \(X\) belongs to the closed convex hull of the equidistribution class of \(Y\) Ryff (1967). Strassen’s theorem says that \(X \preceq_{ssd} Y\) iff one can couple versions \((\tilde X,\tilde Y)\) so that \(\tilde X \le \mathsf P(\tilde Y \mid \tilde X)\) a.s. In the equal-mean case this condition becomes the martingale characterization of convex order, \(\tilde X=\mathsf P(\tilde Y\mid \tilde X)\) a.s. Strassen (1965).
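The easy half of the martingale characterization, that a conditional expectation is dominated in convex order by the variable it averages, can be checked on a small discrete coupling. The sketch assumes a hypothetical joint law for \((\tilde X,\tilde Y)\) with finitely many atoms and computes the law of \(\mathsf P(\tilde Y\mid\tilde X)\). It then compares that law with the law of \(\tilde Y\) using the standard stop-loss test for finitely supported laws: equal means, and \(\mathsf P(\cdot-t)^+\) ordered at every support point.

```python
from collections import defaultdict
from fractions import Fraction as F

def law_of_conditional_mean(joint):
    """Law of E[Y | X] from a joint pmf {(x, y): probability}."""
    px = defaultdict(F)   # P(X = x)
    ey = defaultdict(F)   # E[Y; X = x]
    for (x, y), p in joint.items():
        px[x] += p
        ey[x] += p * y
    return [(ey[x] / px[x], px[x]) for x in px]

def law_of_y(joint):
    """Marginal law of Y as (value, probability) pairs."""
    py = defaultdict(F)
    for (_, y), p in joint.items():
        py[y] += p
    return list(py.items())

def stop_loss(law, t):
    return sum(p * max(v - t, 0) for v, p in law)

def mean(law):
    return sum(p * v for v, p in law)

def cx_le(law_a, law_b):
    """Convex order for finitely supported laws via means and stop-loss transforms."""
    kinks = {v for v, _ in law_a} | {v for v, _ in law_b}
    return mean(law_a) == mean(law_b) and all(
        stop_loss(law_a, t) <= stop_loss(law_b, t) for t in kinks)

# Hypothetical coupling: given X = 0, Y is -1 or 1; given X = 1, Y is 0 or 4.
joint = {(0, -1): F(1, 4), (0, 1): F(1, 4), (1, 0): F(1, 4), (1, 4): F(1, 4)}
cond = law_of_conditional_mean(joint)   # E[Y | X] is 0 or 2, each w.p. 1/2
print(sorted(cond), cx_le(cond, law_of_y(joint)))
```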

Theorem 3 Assume the underlying probability space is standard and atomless. Let \[ \rho:L^\infty \to \mathbb R \] be a monetary convex risk measure. Then \(\rho\) is law invariant if and only if it respects second-order stochastic dominance: \[ X \preceq_{ssd} Y \implies \rho(X)\le \rho(Y). \]

This result is the risk-measure version of Svindland’s characterization of convex lower-semicontinuous functionals by law invariance and convex-order monotonicity Svindland (2014). Since a monetary risk measure on \(L^\infty\) is Lipschitz, lower semicontinuity is automatic, so his theorem applies directly.

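Remark 18. It is a consequence of Theorem 3 that normalized (\(\rho(0)=0\)) law invariant convex risk measures have the positive loading property: \(\rho(X)\ge \mathsf P(X)\) for all \(X\). Indeed, expectations reduce risk. By Jensen's inequality \(\phi(\mathsf P X)\le\mathsf P\phi(X)\) for every convex \(\phi\), so \(\mathsf P(X) \preceq_{ssd} X\), and cash invariance gives \(\rho(X)\ge\rho(\mathsf P X)=\mathsf P X+\rho(0)=\mathsf P X\). This remark justifies the implication to a positive loading in the lower left corner of Figure 2.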
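As an illustration, consider the entropic risk measure \(\rho_\gamma(X)=\gamma^{-1}\log\mathsf P(e^{\gamma X})\), \(\gamma>0\). It is a standard normalized, law invariant, convex monetary risk measure that this section does not otherwise discuss. The sketch evaluates it on a hypothetical sample of equally likely losses and checks the positive loading \(\rho_\gamma(X)\ge\mathsf P(X)\), which for this particular example also follows directly from Jensen's inequality.

```python
import math
import random

def entropic(losses, gamma):
    """Entropic risk (1/gamma) * log E[exp(gamma * X)] for equally likely losses,
    computed as a max-shifted log-mean-exp to avoid overflow."""
    m = max(losses)
    s = sum(math.exp(gamma * (x - m)) for x in losses) / len(losses)
    return m + math.log(s) / gamma

random.seed(0)
sample = [random.gauss(0.0, 1.0) for _ in range(1000)]   # hypothetical losses
mean = sum(sample) / len(sample)
for gamma in (0.1, 1.0, 5.0):
    print(gamma, entropic(sample, gamma) - mean)          # the loading, never negative
```

The loading grows with the risk-aversion parameter \(\gamma\). As \(\gamma\to 0\) the measure tends to the mean.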

Proof. Because \(\rho\) is monetary, it is \(L^\infty\)-Lipschitz and hence norm continuous.

First assume \(\rho\) is law invariant. We show it respects SSD. Suppose first that \(X \preceq_{cx} Y\). By Ryff’s theorem, \(X\) lies in the closed convex hull of the orbit \[ M(Y):=\set{Z\in L^\infty: Z \stackrel{d}= Y}. \] Since \(\rho\) is law invariant, it is constant on \(M(Y)\): \(\rho(Z)=\rho(Y)\) for all \(Z\in M(Y)\). By convexity, the same upper bound holds on the convex hull: \[ \rho\!\left(\sum_{i=1}^n \lambda_i Z_i\right) \le \sum_{i=1}^n \lambda_i \rho(Z_i) = \rho(Y). \] By continuity, the inequality extends to the closure. Hence \[ X \preceq_{cx} Y \implies \rho(X)\le \rho(Y). \]

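Now suppose \(X \preceq_{ssd} Y\). By Strassen's theorem, there exist copies \((\tilde X,\tilde Y)\) with \(\tilde X\stackrel{d}=X\), \(\tilde Y\stackrel{d}=Y\), and \(\tilde X \le \mathsf P(\tilde Y\mid \tilde X)\) a.s. Because the space is standard and atomless, these copies can be realized on \((\Omega,\mathscr F,\mathsf P)\) itself. By law invariance and monotonicity, \(\rho(X)=\rho(\tilde X)\le \rho(\mathsf P(\tilde Y\mid \tilde X))\).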

We next claim that \(\mathsf P(\tilde Y\mid \tilde X) \preceq_{cx} \tilde Y\). To see this, let \(\phi:\mathbb R\to\mathbb R\) be any convex function. Jensen’s inequality gives \(\phi(\mathsf P(\tilde Y\mid \tilde X)) \le \mathsf P(\phi(\tilde Y)\mid \tilde X)\) a.s. Taking expectations, \(\mathsf P\phi(\mathsf P(\tilde Y\mid \tilde X)) \le \mathsf P\phi(\tilde Y)\). Since this holds for every convex \(\phi\), we obtain \(\mathsf P(\tilde Y\mid \tilde X) \preceq_{cx} \tilde Y\).

By the first step of the proof, convex order dominance implies \(\rho(\mathsf P(\tilde Y\mid \tilde X))\le \rho(\tilde Y)=\rho(Y)\). Combining the two inequalities yields \(\rho(X)\le \rho(Y)\). Therefore \(\rho\) respects second-order stochastic dominance.

Conversely, if \(\rho\) respects SSD and \(X \stackrel{d}= Y\), then both \(X \preceq_{ssd} Y\) and \(Y \preceq_{ssd} X\) hold trivially, since the two variables have the same law. Hence \(\rho(X)\le \rho(Y)\) and \(\rho(Y)\le \rho(X)\), so \(\rho(X)=\rho(Y)\). Thus \(\rho\) is law invariant.
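The convex-hull step of the proof can be sketched on a finite sample space of equally likely outcomes, where the rearrangements in \(M(Y)\) are the permutations of the outcome vector of \(Y\). The sketch assumes TVaR (expected shortfall) at level \(p=0.8\) as the law invariant convex risk measure, a standard example chosen here for illustration, together with hypothetical exponential losses. A random convex combination of rearrangements never has larger TVaR than \(Y\), and averaging all cyclic shifts collapses \(Y\) to its mean.

```python
import random

def tvar(losses, p):
    """TVaR_p = (1/(1-p)) * integral_p^1 q(u) du for equally likely losses, where
    the empirical quantile q equals the i-th smallest loss on (i/n, (i+1)/n]."""
    xs = sorted(losses)
    n = len(xs)
    total = 0.0
    for i, x in enumerate(xs):
        lo, hi = i / n, (i + 1) / n
        total += x * max(0.0, hi - max(lo, p))   # length of (lo, hi] inside (p, 1]
    return total / (1 - p)

def mix(vectors, weights):
    """Pointwise convex combination sum_k w_k * v_k of outcome vectors."""
    return [sum(w * v[j] for v, w in zip(vectors, weights))
            for j in range(len(vectors[0]))]

random.seed(1)
y = [random.expovariate(1.0) for _ in range(10)]       # hypothetical losses
perms = [random.sample(y, len(y)) for _ in range(5)]   # rearrangements: same law as y
raw = [random.random() for _ in perms]
total_raw = sum(raw)
w = [r / total_raw for r in raw]                       # convex weights
z = mix(perms, w)                                      # a point of conv M(Y)
print(tvar(z, 0.8), tvar(y, 0.8))                      # by convexity, first <= second

shifts = [y[k:] + y[:k] for k in range(len(y))]        # all cyclic rearrangements
flat = mix(shifts, [1 / len(y)] * len(y))              # every entry equals mean(y)
print(max(flat) - min(flat))
```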

4.2 Schmeidler’s Characterization of Comonotonic Additive Functionals

This section outlines the theory developed in Schmeidler (1986). The corresponding economic ideas are expounded in Schmeidler (1989).

Definition 6 A capacity on \((\Omega,\mathscr F)\) is a set function \[ \nu:\mathscr F \to [0,1] \] such that \[ \nu(\varnothing)=0, \qquad \nu(\Omega)=1, \] and \[ A\subseteq B \implies \nu(A)\le \nu(B). \]

Thus a capacity is a normalized monotone set function; additivity is not assumed. If \[ \nu(A)=\mathsf P(A) \] for all \(A\in\mathscr F\), then \(\nu\) is an ordinary probability measure. More generally, if \[ \nu(A)=g(\mathsf P(A)) \] for some distortion \(g:[0,1]\to[0,1]\), then \(\nu\) is a distorted probability capacity.

Remark 19. The terms capacity, non-additive probability, and fuzzy measure are often used for closely related notions. Schmeidler's theorem relies on monotonicity and normalization, not additivity.

Definition 7 Let \(\nu\) be a capacity, and let \(X\in L^\infty\) be nonnegative. The Choquet integral of \(X\) with respect to \(\nu\) is \[ \int X\,d\nu := \int_0^\infty \nu\set{X\ge t}\,dt. \] Since \(X\in L^\infty\), the integral is finite, and one may equally write \[ \int X\,d\nu = \int_0^{\|X\|_\infty}\nu\set{X\ge t}\,dt. \] For a general bounded random variable \(X\in L^\infty\), the Choquet integral is defined by \[ \int X\,d\nu := \int_0^\infty \nu\set{X\ge t}\,dt + \int_{-\infty}^0 \bigl(\nu\set{X\ge t}-1\bigr)\,dt. \]

Equivalently, writing \(M:=\|X\|_\infty\), \[ \int X\,d\nu = \int_0^M \nu\set{X\ge t}\,dt + \int_{-M}^0 \bigl(\nu\set{X\ge t}-1\bigr)\,dt. \] This is the usual bounded-variable form of the Choquet integral.
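On a finite sample space the defining integrals reduce to finite sums, because \(t\mapsto\nu\set{X\ge t}\) is a step function that changes only at the values of \(X\). The sketch below implements the bounded-variable formula directly. It assumes a uniform probability on four points and a hypothetical distorted capacity \(\nu(A)=g(\mathsf P(A))\) with \(g(s)=\sqrt s\). With \(g(s)=s\) it reproduces the ordinary expectation.

```python
import math

def choquet(x, nu):
    """Choquet integral of the outcome vector x with respect to the capacity nu,
    following  int_0^M nu{X >= t} dt + int_{-M}^0 (nu{X >= t} - 1) dt.

    nu maps a frozenset of outcome indices to [0, 1]. Between consecutive
    breakpoints a < b (the values of x together with 0), the level set
    {X >= t} equals {X >= b} for every t in (a, b]; outside them the integrand is 0.
    """
    pts = sorted(set(x) | {0.0})
    total = 0.0
    for a, b in zip(pts, pts[1:]):
        level = frozenset(i for i, xi in enumerate(x) if xi >= b)
        total += (b - a) * (nu(level) - (1.0 if b <= 0 else 0.0))
    return total

N = 4  # four equally likely outcomes (an assumption for the example)

def additive(A):
    """The uniform probability P(A) itself."""
    return len(A) / N

def distorted(A):
    """Hypothetical distorted capacity g(P(A)) with g(s) = sqrt(s)."""
    return math.sqrt(len(A) / N)

x = [-1.0, 0.0, 2.0, 5.0]
print(choquet(x, additive))    # 1.5, the ordinary mean
print(choquet(x, distorted))   # about 2.78, above the mean because sqrt(s) >= s
```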

Remark 20. Given a capacity \(\nu\), define its dual capacity by \[ \check\nu(A):=1-\nu(A^c). \] It is easy to check that \(\check \nu\) is a capacity, and that the notation is consistent with the dual of a distortion when \(\nu(A)=g(\mathsf P(A))\) for a distortion function \(g\). Using the dual capacity, for bounded \(X\), we can write the Choquet integral in a pleasantly symmetric form: \[ \int X\,d\nu = -\int_{-\infty}^0 \check\nu\{X\le x\}\,dx + \int_0^\infty \nu\{X>x\}\,dx. \] Equivalently, writing \(X=X^+- X^-\) with \(X^+:=\max(X,0)\) and \(X^-:=\max(-X,0)\) the positive and negative parts of \(X\), \[ \int X\,d\nu = -\int X^-\,d\check\nu + \int X^+\,d\nu. \] Thus the negative part of the Choquet integral is naturally governed by the dual capacity, exactly paralleling the use of the dual distortion in distortion risk theory.
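For a two-valued variable the symmetric form can be checked by hand. As a worked example, suppose \(X=b\) on an event \(A\) and \(X=-a\) on \(A^c\), with \(a,b>0\). Then \(\set{X\ge t}=A\) for every \(t\in(-a,b]\), while \(X^+=b\,1_A\) and \(X^-=a\,1_{A^c}\), so \[ \int X\,d\nu = \int_0^b \nu(A)\,dt + \int_{-a}^0 \bigl(\nu(A)-1\bigr)\,dt = b\,\nu(A) - a\bigl(1-\nu(A)\bigr) = b\,\nu(A) - a\,\check\nu(A^c) = \int X^+\,d\nu - \int X^-\,d\check\nu. \] When \(\nu=\mathsf P\) is additive, \(\check\nu=\mathsf P\) and this reduces to the ordinary expectation \(b\,\mathsf P(A)-a\,\mathsf P(A^c)\).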

Remark 21. If \(\nu\) is sigma additive, that is, a probability measure, then the Choquet integral reduces to the ordinary Lebesgue integral: \[ \int X\,d\nu = \nu(X). \] Thus the Choquet integral extends expectation from additive to non-additive set functions.

Theorem 4 (Schmeidler’s representation theorem) Let \((\Omega, \mathscr F, \mathsf P)\) be a probability space and \[ \rho:L^\infty\to\mathbb R \] be a monetary, normalized, and comonotonic additive functional. Then there exists a unique capacity \[ \nu:\mathscr F\to[0,1] \] such that \[ \rho(X)=\int X\,d\nu \qquad\text{for all }X\in L^\infty. \]

Conversely, for every capacity \(\nu\), the Choquet integral \[ X\mapsto \int X\,d\nu \] is monetary, normalized, comonotonic additive, and sup-norm continuous.

Remark 22. In the monetary setting, the earlier basic results show that comonotonic additivity plus monetary already force positive homogeneity, so the theorem identifies the entire comonotonic branch with Choquet integration against capacities. The law-invariant sub-branch corresponds to capacities of the special form \[ \nu(A)=g(\mathsf P(A)). \]

Remark 23. The Choquet integral is continuous in the sup norm by Proposition 1.

Proof. The first step is to recover a candidate capacity from indicators. To that end, define \[ \nu(A):=\rho(1_A)=\rho(A), \qquad A\in\mathscr F, \] where here and going forward we identify a set with its indicator function. Normalization and cash invariance give \(\nu(\varnothing)=\rho(0)=0\) and \(\nu(\Omega)=\rho(1)=1+\rho(0)=1\), and monotonicity gives \(A\subseteq B \implies \nu(A)\le \nu(B)\). Hence \(\nu\) is a capacity. Uniqueness is immediate, because any representing capacity must satisfy \[ \nu(A)=\int A\,d\nu=\rho(A). \]

The second step is to prove the formula for nested simple functions. Consider a simple function written in decreasing chain form \[ X=\sum_{k=1}^n a_k {A_k}, \qquad a_k\ge 0, \qquad A_1\supseteq A_2\supseteq \cdots \supseteq A_n. \] The summands are pairwise comonotone because the sets are nested (for \(A_i\supseteq A_j\), the pair of indicators takes only the values \((0,0)\), \((1,0)\), and \((1,1)\)), and each partial sum \(\sum_{k<m}a_kA_k\) is comonotone with the next summand \(a_mA_m\). Hence repeated comonotonic additivity, together with the positive homogeneity noted in Remark 22, gives \[ \rho(X)=\sum_{k=1}^n a_k \rho({A_k}) =\sum_{k=1}^n a_k \nu(A_k). \] But this is exactly the Choquet integral of such a nested simple function. Equivalently, if one writes \[ X=\sum_{k=1}^n (x_k-x_{k-1})\set{X\ge x_k} \] with \[ 0=x_0\le x_1< \cdots< x_n, \] where \(x_1,\dots,x_n\) are the distinct values of \(X\), then \[ \rho(X)=\sum_{k=1}^n (x_k-x_{k-1})\nu\set{X\ge x_k} = \int X\,d\nu. \]

The third step extends from nonnegative simple functions to bounded nonnegative \(X\). Approximate \(X\ge 0\) uniformly by nested simple functions \(X_n\) built from its level sets. Since \(\rho\) is sup-norm continuous, \[ \rho(X_n)\to \rho(X). \] The Choquet integral is also sup-norm continuous on bounded functions, and for each \(n\) we already know \[ \rho(X_n)=\int X_n\,d\nu. \] Passing to the limit yields \[ \rho(X)=\int X\,d\nu \qquad\text{for all }X\ge 0. \]

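Finally, to extend to a general bounded \(X\), set \(M:=\|X\|_\infty\), so that \(X+M\ge 0\). Both \(\rho\) and the signed Choquet integral are cash invariant (for the latter, substitute \(t\mapsto t-c\) in the signed formula and use \(\nu(\Omega)=1\)), hence \[ \rho(X)=\rho(X+M)-M=\int (X+M)\,d\nu-M=\int X\,d\nu. \] This gives the representation for all bounded \(X\) and proves existence and uniqueness.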

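The converse direction is easier. Fix a capacity \(\nu\). Monotonicity of \(X\mapsto \int X\,d\nu\) follows from monotonicity of the level sets: \(X\le Y\) implies \(\set{X\ge t}\subseteq\set{Y\ge t}\) for every \(t\). Cash invariance follows from the change of variables \(t\mapsto t-c\) in the signed formula, and normalization from \(\nu(\varnothing)=0\) and \(\nu(\Omega)=1\). Comonotonic additivity is immediate on nested simple functions, since the level sets of two comonotone functions form a single chain, and then extends by uniform approximation. Finally, if \(\|X-Y\|_\infty\le\varepsilon\), then \(X-\varepsilon\le Y\le X+\varepsilon\), so monotonicity and cash invariance give \(\bigl|\int X\,d\nu-\int Y\,d\nu\bigr|\le\varepsilon\). In particular, the Choquet integral is sup-norm continuous.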

Remark 24. The theorem involves several key assumptions and ideas working together. Comonotonicity lets us decompose a function along its own nested level sets. Nested indicators play the role that disjoint indicators play for ordinary integration. The values of the functional on indicators define the representing set function. Comonotonic additivity then forces the nested-simple-function formula, which is exactly the Choquet integral. The characterization of SRMs uses the same flow.
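The role of nested level sets can be seen numerically. The sketch assumes a four-point sample space with uniform probability and the hypothetical capacity \(\nu(A)=\mathsf P(A)^2\), and evaluates Choquet integrals with exact fractions. It compares a comonotone pair, whose level sets form a single chain, with an antimonotone pair, for which additivity fails.

```python
from fractions import Fraction as F

def choquet(x, nu):
    """Choquet integral of the outcome vector x w.r.t. the capacity nu,
    integrating the step function t -> nu{X >= t} between breakpoints."""
    pts = sorted(set(x) | {0})
    total = F(0)
    for a, b in zip(pts, pts[1:]):
        level = frozenset(i for i, xi in enumerate(x) if xi >= b)
        total += (b - a) * (nu(level) - (1 if b <= 0 else 0))
    return total

def nu(A, n=4):
    """Hypothetical capacity nu(A) = P(A)**2 on n equally likely outcomes."""
    return F(len(A), n) ** 2

def add(u, v):
    return [a + b for a, b in zip(u, v)]

x = [0, 1, 2, 3]
y = [0, 0, 1, 5]     # comonotone with x: both increase along the index
z = [3, 2, 1, 0]     # antimonotone to x

print(choquet(x, nu), choquet(y, nu), choquet(add(x, y), nu))   # 7/8 1/2 11/8
print(choquet(x, nu) + choquet(z, nu), choquet(add(x, z), nu))  # 7/4 3
```

For the comonotone pair the integral of the sum equals the sum of the integrals. For the antimonotone pair the sum \(x+z\) is the constant \(3\), whose integral exceeds \(7/4\), so this capacity is not additive.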

4.3 Law Invariance Implies Fatou

Theorem 5 (Jouini, Schachermayer, and Touzi (2006), Svindland (2010)) Let \((\Omega,\mathscr F,\mathsf P)\) be a nonatomic probability space, and let \[ \rho:L^\infty \to \mathbb R \] be a finite-valued, law invariant, convex, monetary risk measure. Then \(\rho\) has the Fatou property.

Remark 25. Recall that by Proposition 6, the Fatou property is equivalent to lower semicontinuity for the \(\sigma(L^\infty,L^1)\) topology for convex monetary risk measures on \(L^\infty\).

Remark 26. The functional \(\rho\) is quasiconvex if \[ \rho(\lambda x+(1-\lambda)y)\le \max\{\rho(x),\rho(y)\}, \qquad 0\le \lambda\le 1, \] or, equivalently, if every sublevel set \[ \set{x:\rho(x)\le m} \] is convex. Every convex functional is quasiconvex, but not conversely. For example, every monotone function \(f:\mathbb R\to\mathbb R\) is quasiconvex because its sublevel sets are intervals or rays, yet most such functions, such as \(f(x)=x^3\), are not convex. Quasiconvexity expresses a weak preference for diversification: mixing two positions never produces risk larger than the worse of the two endpoints.

Svindland’s argument is really about convex law-invariant sublevel sets, so quasiconvexity is enough. He shows that every law-invariant norm-lower-semicontinuous quasiconvex function on \(L^\infty\) is lower semicontinuous for every \(\sigma(L^\infty,L^q)\) topology, \(1\le q<\infty\).

A set \(C\subseteq L^\infty\) is law invariant if \(Y\in C\) whenever \(X\in C\) and \(Y\stackrel{d}=X\). The proof of the theorem relies on the next proposition, which in turn relies on the following lemma.

Proposition 10 Let \(C\subseteq L^\infty\) be convex, law invariant, and closed for the norm topology of \(L^\infty\). Then \(C\) is closed for every \(\sigma(L^\infty,L^q)\) topology, \(1\le q<\infty\).

Lemma 7 If \(C\subseteq L^\infty\) is convex, law invariant, and norm closed, then for every sub-\(\sigma\)-algebra \(\mathscr A\subseteq\mathscr F\) and every \(X\in C\), we have \(\mathsf P(X\mid\mathscr A)\in C\).

Proof. Here is a sketch of Svindland’s proof.

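Step 1: constants. First prove that \[ \mathsf P X \in C \] for every \(X\in C\). Take \(\varepsilon>0\) and write \(q_X\) for the quantile function of \(X\). Partition \((0,1)\) into the \(n\) equal intervals \(I_j:=((j-1)/n,\,j/n)\). Partition \(\Omega\) into sets \(B_1,\dots,B_n\) of probability \(1/n\), each carrying a random variable \(V_k\) that is uniformly distributed on \((0,1)\) under \(\mathsf P(\cdot\mid B_k)\); this is possible because the space is atomless. For a permutation \(\sigma\) of \(\{1,\dots,n\}\), set \(X_\sigma:=q_X\bigl((\sigma(k)-1+V_k)/n\bigr)\) on \(B_k\), which places the quantile piece of \(X\) over \(I_{\sigma(k)}\) on the block \(B_k\). Each such rearrangement has the same law as \(X\), hence lies in \(C\) by law invariance. Averaging over all permutations gives a random variable \(X_n\in C\) by convexity, and on \(B_k\) \[ X_n=\frac1n\sum_{j=1}^n q_X\!\left(\frac{j-1+V_k}{n}\right), \] a Riemann sum for \(\int_0^1 q_X(u)\,du=\mathsf P X\). Because \(q_X\) is increasing, each term differs from \(n\int_{I_j}q_X(u)\,du\) by at most the increase of \(q_X\) over \(I_j\), and these increases sum to at most \(2\|X\|_\infty\). Hence \(\|X_n-\mathsf P X\|_\infty\le 2\|X\|_\infty/n\le\varepsilon\) for \(n\) large enough. Since \(C\) is norm closed, \(\mathsf P X\in C\).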
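The averaged value on a block is a shifted Riemann sum for \(\int_0^1 q_X(u)\,du\). For an increasing \(q_X\) its uniform error is at most the total increase of \(q_X\) divided by \(n\), even when one interval contains a large jump (a standard estimate for monotone integrands, assumed here). The sketch checks this for two hypothetical quantile functions, a smooth one and a Bernoulli one with a jump, over a grid of block positions \(v\in(0,1)\).

```python
def block_value(q, n, v):
    """Average of the n rearranged quantile pieces on a block where the uniform
    coordinate equals v in (0, 1): (1/n) * sum_j q((j + v) / n), j = 0..n-1."""
    return sum(q((j + v) / n) for j in range(n)) / n

def worst_error(q, mean, n, grid=200):
    """Largest |block value - mean| over a grid of positions v in (0, 1)."""
    vs = [(i + 0.5) / grid for i in range(grid)]
    return max(abs(block_value(q, n, v) - mean) for v in vs)

def smooth(u):
    """Quantile function of X = U**2 for uniform U: mean 1/3, total increase 1."""
    return u * u

def bernoulli(u):
    """Quantile function of a Bernoulli(0.7) loss: mean 0.7, one jump of size 1."""
    return 0.0 if u < 0.3 else 1.0

for n in (5, 7, 20, 100):
    print(n, worst_error(smooth, 1 / 3, n), worst_error(bernoulli, 0.7, n), 1 / n)
```

The jump is not smoothed away, since for \(n=7\) the Bernoulli error stays above \(0.1\). It is, however, charged only once, so the error still shrinks like \(1/n\).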