I recently created a webpage to factor integers using open-source tools. I remember a BASIC program to factor integers was one of my first programs. It was cool to learn that 1001 = 7 x 11 x 13. Experimenting with modern factoring routines blew my mind, so fast for such large numbers. But then math-me asked: is it actually that amazing? How hard is a random integer to factor, as opposed to an RSA product of two equal sized primes? How many factors do you expect? What sizes? A bit of exploration convinced me that modern factoring methods are actually more amazing than I initially thought. There’s virtually no trial division. But a central place for the birthday collision problem and modular arithmetic. There is much magic, involving lots of cool number theory, including my friends Elliptic Curves—subject of my PhD thesis. This LLM-fueled post describes some of the magic and how the open-source program YAFU (Yet Another Factoring Utility) weaves together different tools to deliver spectacular results.

TL;DR

Starting from the assumption that prime divisibility for \(p \le B\) consists of independent events, the probability that a random \(n\)-digit number \(X \gg B\) has a prime factor \(\le B\) is about \(1/p\). Therefore, the probability is has no prime factor\(\le B\) (i.e., it is “\(B\)-rough”) is \[

\Pr(P^-(X) > B) \approx \prod_{p \le B} \left( 1 - \frac{1}{p} \right).

\] Applying Mertens’ Third Theorem, as \(B \to \infty\), \[

\prod_{p \le B} \left( 1 - \frac{1}{p} \right) \approx \frac{e^{-\gamma}}{\ln B}.

\] Substituting \(B = 10^k\)\[

\frac{e^{-\gamma}}{\ln(10^k)} = \frac{e^{-\gamma}}{k \ln(10)}.

\] Using the numerical constants \(e^{-\gamma} \approx 0.561459\) and \(\ln(10) \approx 2.302585\) yields \[

\frac{0.561459}{2.302585 \cdot k} \approx \frac{0.2438}{k} \approx \frac{0.25}{k}

\] and the probability there is a factor with \(\le k\)-digits is roughly \[

1 - \frac{1}{4k}.

\] Thus we get Table 1 for splitting difficulty. It includes the Merten’s approximation, a more exact calculation using the Buchstab \(\omega\)-function and the simple \(1/4\)-digits rule. Roughly, YAFU uses trial division for \(k \le 4\) (94% hit rate), followed by iterations of Fermat squares, and then Pollard’s rho for \(5 \le k \le 15\) (98.4% cumulative). Beyond this, Elliptic Curve Methods (ECM) hunt for factors up to \(k \approx 35\) (99.3% hit rate), typically requiring a few minutes. If the number remains unsplit, we are left with the “Balanced Case” (\(k > 35\)), where YAFU invokes the Self-Initializing Quadratic Sieve (SIQS). For a 100-digit number, SIQS is the terminal stage—usually finishing in tens of minutes—as it is significantly more efficient than the General Number Field Sieve (GNFS) until the target exceeds approximately 120 digits.

Code

from greater_tables import GTimport numpy as npimport pandas as pdfrom scipy.interpolate import interp1ddef compute_comparison_table(total_digits=100, max_k=50):""" Computes splitting probabilities comparing the exact Buchstab function against the Mertens' Theorem approximation. """# --- 1. Buchstab omega(u) Calculation --- u_max = total_digits /1.0 step =0.01 u_space = np.arange(1.0, u_max + step, step) omega = np.zeros_like(u_space)# Base case for delay-differential equation mask_1_2 = (u_space >=1.0) & (u_space <=2.0) omega[mask_1_2] =1.0/ u_space[mask_1_2]# Integrate: (u*w(u))' = w(u-1)for i inrange(len(u_space)): u = u_space[i]if u >2.0: idx_minus_1 =int((u -1.0-1.0) / step) integral = np.trapezoid(omega[:idx_minus_1+1], u_space[:idx_minus_1+1]) omega[i] = (1.0+ integral) / u omega_func = interp1d(u_space, omega, kind='cubic')# --- 2. Generate Comparison DataFrame --- gamma =0.5772156649 exp_neg_gamma = np.exp(-gamma) results = []for k inrange(1, max_k +1): u = total_digits / k w_u =float(omega_func(u)) ln_B = k * np.log(10)# Buchstab Split Prob (Exact) prob_no_factor_b = w_u / (k * np.log(10)) prob_split_buchstab =1.0- prob_no_factor_b# Mertens Split Prob (Approximation)# Prob(no factor <= B) ~ e^-gamma / ln(B) prob_no_factor_m = exp_neg_gamma / ln_B prob_split_mertens =1.0- prob_no_factor_m results.append({"k_digits": k,"u_scale": round(u, 2),"prob_split_buchstab":round(max(0, prob_split_buchstab) *100, 2),"prob_split_mertens":round(max(0, prob_split_mertens) *100, 2),"1/4k-rule": round(100* (1-.25/ k), 2) })return pd.DataFrame(results).set_index('k_digits')# Executedf_comp = compute_comparison_table()GT(df_comp.loc[[1,2,3,4,5,6,7,8,9,10,15,20,25,30,35,40,45,50]])

Table 1: Merten’s approximation for the difficulty of splitting an 100-digit number. Percent of numbers with \(k\)-digit smallest factor.

k_digits

u_scale

prob_split_buchstab

prob_split_mertens

1/4k-rule

1

100.00

75.62

75.62

75.00

2

50.00

87.81

87.81

87.50

3

33.33

91.87

91.87

91.67

4

25.00

93.90

93.90

93.75

5

20.00

95.12

95.12

95.00

6

16.67

95.94

95.94

95.83

7

14.29

96.52

96.52

96.43

8

12.50

96.95

96.95

96.88

9

11.11

97.29

97.29

97.22

10

10.00

97.56

97.56

97.50

15

6.67

98.37

98.37

98.33

20

5.00

98.78

98.78

98.75

25

4.00

99.02

99.02

99.00

30

3.33

99.19

99.19

99.17

35

2.86

99.30

99.30

99.29

40

2.50

99.39

99.39

99.38

45

2.22

99.48

99.46

99.44

50

2.00

99.57

99.51

99.50

From 40 Quadrillion Years to Less Than Two Days

In 1977, the architects of the RSA algorithm—Ron Rivest, Adi Shamir, and Leonard Adleman—published a challenge in Scientific American that seemed mathematically invincible. They presented a 129-digit number (RSA-129) and a secret message, with Rivest famously predicting that factoring it would take 40 quadrillion years (\(4\times 10^{16}\) years) based on the technology and known world of the 1970s. This wasn’t a casual guess; it was a calculation based on the best algorithms of the era, like Pollard’s rho and Continued Fractions (CFRAC), which scaled poorly as numbers grew. The $100 prize they offered felt like a safe bet for a task meant to outlast the sun.

The “aeons” prediction collapsed in just 17 years, primarily due to a double-exponential explosion in both mathematics and infrastructure Table 2. The first blow came from algorithmic shifts: in 1981, Carl Pomerance invented the Quadratic Sieve (QS), moving the complexity of factoring from nearly exponential to sub-exponential. While Rivest modeled a world of linear improvements, mathematicians found a shortcut that effectively changed the “physics” of the problem. By the time a global team tackled the challenge in 1993, they weren’t using the tools Rivest had measured; they were using the Multiple Polynomial Quadratic Sieve (MPQS), a far more efficient “industrial factory” for manufacturing the mathematical relations needed to break the code.

The final nail in the coffin was the birth of distributed computing via the nascent Internet. Instead of a single supercomputer, the 1994 team coordinated 600 volunteers across 20 countries, harnessing the idle cycles of 1,600 workstations (and even a few fax machines). This collective effort provided approximately 5,000 MIPS-years of compute, finishing the job in just eight months of wall-clock time. When the message was finally decrypted, it revealed the famous phrase: “The Magic Words are Squeamish Ossifrage.” Today, the Industrial transition is complete—a modern implementation like CADO-NFS can factor that same RSA-129 modulus on a commercial cloud instance in less than 24 hours for the price of a decent lunch.

Table 2: The RSA-129 speedup: decomposing the “40 quadrillion year” gap.

Factor

Magnitude of Gain

Description

Algorithm (Mathematics)

~\(10^7\) to \(10^9\)

Transitioned from CFRAC to the Quadratic Sieve (QS) and MPQS. This shifted the problem from near-exponential to sub-exponential complexity (\(L_n[1/2, c]\)).

Hardware (Moore's Law)

~\(10^4\)

Desktop clock speeds moved from ~1 MHz (1977) to ~100 MHz (1994), alongside significantly more efficient 32-bit and 64-bit architectures.

Networking (Distribution)

~\(10^3\)

Instead of one supercomputer, the 1994 team used the early Internet to coordinate 600 volunteers and 1,600 workstations.

RSA-129 Video

Here is a link to the Numberphile Video RSA-129, with Ron Rivest. He says several interesting things (edited for continuity):

[T]he security of the cryptographic scheme that we proposed depends on the assumptions that nobody should be able to find out a \(p\) and a \(q\) from the product \(pq\).

But there’s a missing piece then, and still now, to some extent. We don’t really know how hard factoring the products of two large prime numbers is.

And so we set out a challenge. And the goal there was just to learn about the difficulty of factoring. It was poorly studied at the time.

So with RSA-129, it sat there for quite a while. But then in ’94 a team of researchers came up with a factorization.

And that was what a shock to us.

You can sort of predict the growth of technology. So the the speed of computers you can predict. The amount of, the number of computers you can get together to work on a problem you can predict pretty well. What you don’t know how to predict so well is the better, the improvement in algorithms. And that’s really what the key was here. Better algorithms.

At the time I thought it was probably, and maybe still is, the cheapest purchase of lots and lots of computer time ever.

The RSA-129 Challenge

RSA-129 =

114381625757888867669235779976146612010218296

721242362562561842935706935245733897830597123

563958705058989075147599290026879543541

=

349052951084765094914784961990389813341776463

8493387843990820577 × 32769132993266709549961

988190834461413177642967992942539798288533

Info:Complete Factorization / Discrete logarithm / Class group:

Total cpu/elapsed time for entire Complete Factorization 904171/132448 [1d 12:47:28]

Info:root: Cleaning up computation data in /tmp/cado.pc47pzv7

3490529510847650949147849619903898133417764638493387843990820577

32769132993266709549961988190834461413177642967992942539798288533

CADU-NFS ran RSA-129 in 132,448 seconds (about 37 hours) on my Studio II (Intel i7-7820HQ@2.9GHz 4 cores/ 32GB RAM). The gap from 40 quadrillion years (\(4 \times 10^{16}\) years) down to 37 hours (\(4.2 \times 10^{-3}\) years) is a speedup factor of approximately \(10^{19}\).

Table 3: The RSA-129 speedup: decomposing the “40 quadrillion year” gap.

Component

Gain Factor

Narrative Logic

Clock Frequency

\(3,500\times\)

1 MHz (1977 baseline) to 3.5 GHz (2026).

Core Parallelism

\(6.8\times\)

Moving from a single-threaded VAX to 8 active cores (utilization adjusted).

Architecture (IPC)

\(\approx 4\times\)

Superscalar execution (multiple instructions per cycle) vs. 1970s multi-cycle ops.

Word Size and AVX2

\(\approx 8\times\)

64-bit native math + 256-bit SIMD processing chunks of the modulus at once.

HARDWARE SUBTOTAL

\(\approx 7.6 \times 10^5\)

Brute-force gain. Without better math: 50 billion years.

ALGORITHM (NFS)

\(\approx 1.2 \times 10^{13}\)

The jump from \(L_n[1/2]\) (CFRAC) to \(L_n[1/3]\) (GNFS).

TOTAL SPEEDUP

\(\approx 9.5 \times 10^{18}\)

Bridge from 40 quadrillion years to 37 hours.

The algorithmic speedup roughly splits into complexity class vs. implementation. \(L_n[\alpha, c] = \exp(c (\ln n)^\alpha (\ln \ln n)^{1-\alpha})\), \(\ln n = 129 \ln 10 \approx 297.03\), and \(\ln \ln n = \ln(297.03) \approx 5.69\).

Complexity Class (\(\alpha\)): Shifting the “dial” from \(1/2\) to \(1/3\) provides the first \(\approx 3.2 \times 10^7\) speedup.

Implementation/residual (\(o(1)\)): The remaining \(\approx 4 \times 10^5\) algorithmic gain comes from 50 years of refining the “Sieve” mechanics—machine-tuned assembly, better polynomial selection, and the highly optimized matrix solvers in CADO-NFS that weren’t even theoretically fully described in 1977.

Expectations of a Random Integer

Before we can master the “How” of factorization, we must understand the “What.” When we reach into the infinite bag of integers and pull one out, we aren’t just grabbing a needle in a haystack; we are grabbing a structured object governed by the laws of probabilistic number theory.

The Density of Primes

The journey begins with a binary question: Is it prime? The Prime Number Theorem (PNT) tells us that the probability an integer \(X\) chosen uniformly at random up to \(N\) is prime is \(\approx 1/\ln N\). For an \(n\)-digit number (\(N = 10^n\)), this probability scales linearly with the number of digits: \[\Pr(X \text{ is prime}) \approx \frac{1}{\ln(10^n)} = \frac{1}{n \ln 10} \approx \frac{0.434}{n}\]

For a 100-digit number, you’ll find a prime roughly every 230 integers. This is the first wall any factorization utility hits. If you don’t check for primality first, you might spend a billion years trying to find factors that don’t exist.

How Many Factors? The Erdős–Kac Theorem

If the number isn’t prime, how many factors should we expect? A common intuition is that a 100-digit number must have dozens of prime factors. The truth, provided by the Erdős–Kac Theorem, is far more sparse.

Erdős–Kac is essentially the Central Limit Theorem for prime factors. It states that the number of distinct prime factors \(\omega(X)\) of a random integer \(X \le N\) follows a normal distribution: \[\frac{\omega(X) - \ln \ln N}{\sqrt{\ln \ln N}} \sim \mathcal{N}(0, 1)\]

This leads to a startling realization: the average number of prime factors grows with the logarithm of the number of digits. For a 100-digit number: \(N = 10^{100}\), \(\ln N \approx 230.25\) and \(\ln \ln N \approx 5.44\). The average 100-digit number has only 5 or 6 distinct prime factors.

Precision Counting: The Sathe-Selberg Formula

Erdős–Kac is an asymptotic result. For precise probabilities of having exactly\(k\) factors, we turn to the Sathe-Selberg formula. This formula allows us to estimate the count of \(k\)-factor integers \(\pi_k(x)\) as: \[\pi_k(x) \sim \frac{x}{\ln x} \frac{(\ln \ln x)^{k-1}}{(k-1)!} G\left(\frac{k-1}{\ln \ln x}\right)\] where \(G(z)\) is an analytic function defined by: \[G(z) = \frac{1}{\Gamma(z+1)} \prod_p \left(1 + \frac{z}{p-1}\right) \left(1 - \frac{1}{p}\right)^z\]\(G(z)\) is slowly varying. If \(k \approx \ln \ln N\) (the average case), then \(z \approx 1\) and \(G(1) = 1\). \(G\) matters in the tail of the distribution.

For small \(k\), this formula reveals that the distribution is Poisson-like. As \(k\) moves far from \(\ln \ln N\), the density of drops off exponentially. Why does the distribution of prime factors look so much like a Poisson distribution?

If you view the existence of each prime factor as an independent event with probability \(1/p\), then the expected number of factors for \(X \le N\) is the sum of these probabilities: \[\sum_{p \le N} \frac{1}{p} \approx \ln \ln N\]

This \(\lambda = \ln \ln N\) becomes the intensity of our factor-finding process. The Sathe-Selberg formula is essentially a Poisson distribution with a corrective number-theoretic layer \(G(z)\):

As long as you are investigating numbers near the average—the typical random integer—then \(G(z) \approx 1\), and you can rely on the Poisson approximation to give you accurate probabilities. It is only in the tails of the distribution (like seeking primes or numbers with an extreme abundance of factors) where the prime product \(G(z)\) exerts its influence.

The Poisson approximation of Sathe-Selberg for the density of \(k\)-factor integers writes \[\text{Density} \approx \frac{1}{\ln N} \frac{(\ln \ln N)^{k-1}}{(k-1)!}\] with \(\lambda = \ln \ln N\) and \(m = k-1\). The Poisson formula gives \[\Pr(k-1) = \frac{(\ln \ln N)^{k-1} e^{-\ln \ln N}}{(k-1)!}\] Since \(e^{-\ln \ln N} = \frac{1}{\ln N}\), the Poisson probability for \(k-1\) events is identical to the leading term of the Sathe-Selberg formula.

Smoothness and the Dickman-de Bruijn Function

In the factorization business, the most important property is smoothness. We say an integer is \(y\)-smooth if all its prime factors are \(\le y\). The probability that a random number is \(y\)-smooth is given by the Dickman function \(\rho(u)\), where \(u = \ln X / \ln y\) is the ratio of total digits to smooth digits.

The function is defined by the delay-differential equation: \[u\rho'(u) + \rho(u-1) = 0, \text{ with } \rho(u)=1 \text{ for } 0 \le u \le 1\]

As \(u\) increases, the probability of smoothness plummets.

For small \(u\) (e.g., \(u=2\)): Roughly 30% of numbers are \(N^{1/2}\)-smooth. These are the balanced numbers (like RSA keys) and they are relatively common.

Large \(u\) (e.g., \(u=10\)): Only 1 in \(10^{13}\) numbers are \(N^{1/10}\)-smooth. If you’re looking for a 10-digit factor in a 100-digit number, you are fighting these odds.

The Largest Prime Factor: Golomb–Dickman

If we pick a random number and factor it completely, what is the size of the boss—the largest prime factor \(P_1(X)\)?

The expected fraction of digits of the largest prime factor relative to the total digits of \(N\) is the Golomb–Dickman constant: \[G = \int_0^\infty \rho(u) du \approx 0.624329...\]

This means that for your random 100-digit number, you should expect the largest prime factor to be roughly 62 digits long. This constant is a fundamental limit on why we need sophisticated sieving like QS or GNFS—because trial division will never reach that 62-digit titan.

The Decay Table

Table 4 shows how the probability of finding a \(\le B\)-smooth 100-digit number \(N\) decays as \(B\) gets smaller. This is the probability that none of the prime factors of \(N\) exceed the bound \(B\). \(u = \ln N / \ln B\) is the ratio of total digits to smooth digits.

Table 4: Dickman’s decay table: the probability of finding a \(B\)-smooth 100-digit number.

\(u\)

\(B\) (Smoothness)

\(\rho(u) \approx\)

The Factorizer's Reality

1

\(10^{100}\)

\(1.0\)

Every number is \(N\)-smooth.

1.47

\(10^{68}\)

\(0.5\)

Median, 50% of random numbers have Boss \(\le 68\) digits

1.6

\(10^{62.5}\)

\(0.44\)

Mean, Golomb–Dickman average Boss size

2

\(10^{50}\)

\(0.306\)

Balanced semiprimes live here. Hard for Pollard.

3

\(10^{33}\)

\(0.048\)

ECM starts to excel here.

4

\(10^{25}\)

\(0.0049\)

Pollard's Rho finds these in minutes.

6

\(10^{16}\)

\(1.9 \times 10^{-5}\)

Rare easy numbers; Rho finds these in seconds.

10

\(10^{10}\)

\(2.7 \times 10^{-13}\)

Virtually impossible to be this smooth.

Appreciating this table is the transition between Dark Ages guesswork and modern algorithmic strategy.

The Dark Ages and the Foundation of Magic

Before we can appreciate the Magic of sub-exponential algorithms, we must understand why the old ways fail and the mathematical bedrock upon which modern factorizers are built.

The Complexity of the Dark Ages

The most primitive method of factorization is Trial Division: checking every integer \(d \in [2, \sqrt{N}]\) to see if \(d\) divides \(N\). (Or every \(d\) so that \(d<N/d\) to avoid computing an “expensive” square-root as my 1970s CS teacher explained!)

The complexity is the tragedy here. If \(N\) has \(n\) digits, trial division is \(O(\sqrt{10^n}) = O(10^{n/2})\). This is exponential complexity in terms of the input size \(n\).

Consider a 100-digit RSA-style semiprime: \(\sqrt{N} = 10^{50}\). Even if your computer could perform \(10^{15}\) divisions per second (a massive supercomputer cluster), it would take \(\approx 3.17 \times 10^{27}\) years to find the factor.

The Dark Ages aren’t just slow; they are physically impossible for the numbers that secure our modern world.

Magic #0: The Greatest Common Divisor (GCD)

If there is a patron saint of factorization, it is Euclid. The Euclidean Algorithm is Magic #0 because it allows us to find the shared factors between two numbers without factoring either of them.

The algorithm relies on the observation that \(\gcd(a, b) = \gcd(b, a \pmod b)\). By repeatedly applying this, we reduce the problem to a series of remainders.

The complexity of GCD is \(O((\ln N)^2)\), which is polynomial time in the number of digits. It is blazingly fast. In every advanced algorithm (Pollard’s \(\rho\), ECM, QS), the final step is almost always a GCD. We use complex math to generate a number \(X\) that we hope shares a factor with \(N\), and then we let Euclid do the final pluck.

Magic #1: Binary Exponentiation

Many primality tests and factorization steps require us to calculate \(a^E \pmod N\), where \(E\) might be as large as \(N\) itself. Calculating this by multiplying \(a\) by itself \(E\) times would take us right back to the Dark Ages.

The Magic: We use the binary representation of \(E\).

Square: Generate a sequence \(a^1, a^2, a^4, a^8, a^{16} \dots \pmod N\) by repeatedly squaring the previous result.

Multiply: Combine only the powers where the corresponding bit in \(E\) is 1.

For a 100-digit \(E\), we only need \(\approx 333\) squarings and at most 333 multiplications. This reduces a lifetime of the universe calculation to a few microseconds. Without binary exponentiation, even the simplest primality test would be unusable.

Primality Testing

Before we spend a single CPU cycle trying to find a factor, we must ensure the number isn’t prime. In the modern era, we don’t look for factors to prove primality; we look for witnesses to compositeness.

Fermat’s Little Theorem

Fermat gave us the first great Gatekeeper: If \(p\) is prime, then for any \(1 < a < p\): \[a^{p-1} \equiv 1 \pmod p\] If we find an \(a\) such that \(a^{N-1} \not\equiv 1 \pmod N\), then \(N\) is definitely composite. If the congruence holds, \(N\) is a probable prime.

The Flaw: Carmichael numbers (like 561). These composite numbers satisfy the Fermat test for all bases \(a\). We need a stronger gatekeeper.

Magic #2: The Miller-Rabin Test

Miller-Rabin refines Fermat’s idea by exploiting the fact that in a prime field, the only square roots of \(1\) are \(1\) and \(-1\). Consider \(N = 15\), where \(4^2 = 16 \equiv 1 \pmod{15}\). Thus, \(4\) is a square root of 1 that is neither \(1\) nor \(-1\). This is a non-trivial square root and shows \(N\) is not prime. In general if \(a^2\equiv 1\pmod{p}\) then \(p\mid (a-1)(a+1)\) and if \(p\) is prime it must divide \(a-1\) or \(a+1\).

For an odd \(N\), we write \(N-1 = 2^s \cdot d\). We then look at the sequence: \[a^d, a^{2d}, a^{4d}, \dots, a^{2^s d} \pmod N\] If \(N\) is prime, this sequence must either start with \(1\), or it must hit \(-1\) (\(N-1\)) at some point before it reaches \(1\). If it reaches \(1\) without having hit \(-1\) just before it, we have found a non-trivial square root of 1, which is a mathematical proof that \(N\) is composite.

Example 1 (Miller-Rabin in Python) This implementation uses pow(a, d, n) (which uses Binary Exponentiation under the hood) and returns a pandas DataFrame to visualize the witness process. Note the use of Python for ... and else which runs if the loop break is not called.

Code

from sympy import nextprimeimport randomdef miller_rabin(n, k=20, verbose=False, small_primes=True):""" Industrial-grade Probabilistic Primality Test. k = number of rounds (bases to test). Cohen recommends 20 passes. """if n <2: returnFalse, Noneif n ==2or n ==3: returnTrue, Noneif n %2==0: returnFalse, Noneif small_primes:# Small prime trial division (cheap and helpful) small_primes = (5, 7, 11, 13, 17, 19, 23, 29, 31, 37)for p in small_primes:if n == p:returnTrue, pif n % p ==0:returnFalse, p# Step 1: Write n-1 as 2^s * d d = n -1 s =0while d %2==0: d //=2 s +=1# GPT came up with this clever but impenetrable code# Write n-1 = 2^s * d with d odd# d = n - 1# s = (d & -d).bit_length() - 1 # v2(d)# d >>= s history = []for i inrange(k): a = random.randint(2, n -2) x =pow(a, d, n) iteration_data = {'n': n,'d': d,'s': s,"iteration": i +1,"base_a": a,"initial_x": x,"outcome": ("Probable Prime"if x ==1or x == n-1else"Checking S-steps") }if x ==1or x == n -1:if verbose: history.append(iteration_data)continue# Repeat squaring to find -1for r inrange(1, s): x =pow(x, 2, n)if x == n -1: iteration_data["outcome"] =\f"Probable Prime (found -1 at s={r})"# break skips else belowbreakelse:# If we never hit -1 (no break), it's definitely composite iteration_data["outcome"] ="COMPOSITE"if verbose: history.append(iteration_data)returnFalse, pd.DataFrame(history)returnFalse, Noneif verbose: history.append(iteration_data)returnTrue, pd.DataFrame(history) if verbose elseNonen =1230310p1, p2 = nextprime(n), nextprime(n +100)res, df = miller_rabin(p1, 20, True, False)GT(df, show_index=False)

Magic #3: Fermat Factorization and Why “Balanced” Primes are a Security Risk

In most introductory math classes, we’re taught that factoring large numbers is “hard.” But it is easy to factor a number with two prime factors that are very close in size. The method? Fermat’s Factorization Method.

Pierre de Fermat realized that every odd number \(N\) can be represented as the difference of two squares \[

N = x^2 - y^2.

\] If you can find \(x\) and \(y\), then by the basic difference-of-squares identity, you have your factors: \[

N = (x - y)(x + y).

\] For any \(N = pq\), the specific \(x\) and \(y\) are \(x = \dfrac{p+q}{2}\) (the average) and \(y = \dfrac{p-q}{2}\) (the distance from the average)

Fermat’s method doesn’t try to divide \(N\) by \(3, 5, 7, \dots\). Instead, it starts at the ceiling of the square root of \(N\) (\(\lceil\sqrt{N}\rceil\)) and moves upward, looking for a perfect square.Calculate \(x = \lceil\sqrt{N}\rceil\). Check if \(x^2 - N\) is a perfect square (let’s call it \(y^2\)). If it is, you’ve found the factors: \((x-y)\) and \((x+y)\). If not, increment \(x\) by 1 and repeat.

It makes sense to run a limited number of Fermat iterations (usually a few thousand) specifically to catch “close” primes. If \(x^2 - N\) isn’t a square within that limit, assume the primes aren’t close enough and move on.

The Fermat method is why modern cryptography standards (like FIPS 186-4) require that the two primes \(p\) and \(q\) for an RSA key must stay within a specific range: they must be large enough to resist the Quadratic Sieve, but different enough in magnitude to ensure Fermat’s method would take billions of years to bridge the gap.

Magic #4: Pollard’s Rho or The Birthday Problem at Work

If the number passes our primality tests and is confirmed composite, we don’t jump straight to the heavy sieving algorithms. We first try to catch mid-sized factors using the Birthday Problem logic of Pollard’s \(\rho\) method.

The core intuition is the collision trick, aka Birthday problem. Imagine you are in a room with \(N\) people. To find someone with a specific birthday (say, January 1st), you need to ask nearly 365 people. This is Trial Division logic—it’s linear.

But to find any two people who share a birthday, you only need 23 people. This is the Birthday Problem. In factorization, we don’t look for a factor \(p\); we look for two numbers \(x\) and \(y\) such that \(x \equiv y \pmod{p}\).

The algorithm uses a random walk in a small ring: collisions are inevitable. We use a pseudorandom function, usually \(f(x) = (x^2 + c) \pmod{N}\), to generate a sequence.

The sequence is calculated modulo \(N\) (the big number).

However, it effectively shadows a sequence modulo \(p\) (the smaller, unknown factor).

Because \(p < N\), a collision \(x_i \equiv x_j \pmod{p}\) will happen much faster—specifically in \(O(\sqrt{p})\) steps.

Once we have a collision modulo \(p\), then \(p\) divides the difference \(|x_i - x_j|\). We use Magic #0 (GCD) to extract it: \[g = \gcd(|x_i - x_j|, N)\]

To find this collision without storing every \(x\) in memory, we use two pointers in a method called Floyd’s circle-finding. The Tortoise moves one step at a time, and the Hare moves two steps. In a finite set, the sequence eventually enters a cycle, forming the shape of the Greek letter \(\rho\). The Hare will eventually lap the Tortoise inside the cycle modulo \(p\).

Example 2 (Pollard’s Rho in Python)

Code

import mathdef pollard_rho(n, c=1):""" Pollard's Rho algorithm with Floyd's cycle-finding. Returns a factor of n. With verbose reporting. """if n %2==0: return2def f(x): return (x*x + c) % n ans = [] x =2; y =2; d =1 ans.append([x, y, d])while d ==1: x = f(x) # Tortoise moves 1 step y = f(f(y)) # Hare moves 2 steps d = math.gcd(abs(x - y), n) ans.append([x, y, d])if d == n:# Failure: hit a collision mod n, try a different constant creturn pollard_rho(n, c +1) df = pd.DataFrame(ans, columns=['Tortoise', 'Hare', 'gcd']) df.index.name ='iteration'return d, dfn =1000p1, p2 = nextprime(n), nextprime(n +100)factor, df = pollard_rho(p1 * p2)print(p1, p2, p1*p2)GT(df)

1009 1103 1112927

Table 5: Pollard’s rho with known composite number plucks out the factor 1103.

iteration

Tortoise

Hare

gcd

0

2

2

1

1

5

26

1

2

26

458,330

1

3

677

764,859

1

4

458,330

802,316

1

5

304,724

1,027,643

1

6

764,859

604,183

1

7

325,259

89,551

1

8

802,316

502,705

1

9

664,619

598,112

1

10

1,027,643

22,905

1

11

382,712

594,941

1

12

604,183

848,352

1

13

380,271

894,883

1

14

89,551

1,026,003

1

15

742,567

703,264

1

16

502,705

819,979

1

17

1,096,063

972,193

1

18

598,112

778,065

1

19

935,519

547,538

1

20

22,905

582,834

1

21

450,409

889,468

1

22

594,941

869,614

1

23

603,329

107,441

1

24

848,352

1,072,566

1

25

161,107

7,068

1

26

894,883

867,408

1

27

57,424

942,412

1

28

1,026,003

224,359

1

29

120,374

654,529

1

30

703,264

862,996

1

31

1,059,532

301,569

1

32

819,979

1,000,871

1,103

The Power: For a factor \(p \approx 10^{14}\), trial division needs \(10^{14}\) steps. Pollard’s \(\rho\) needs \(\approx \sqrt{10^{14}} = 10^7\) steps. On a modern CPU, this is instantaneous.

Magic #5: Pollard’s \(p-1\) Method

If Pollard’s \(\rho\) is a random walk, the \(p-1\) method is a targeted strike. It is incredibly fast, but it has a very specific weakness: it only works if the number \(N\) has a prime factor \(p\) such that the number \(p-1\) is smooth.

Recall Fermat’s Little Theorem: If \(p\) is prime, then for any \(a\) not divisible by \(p\): \[a^{p-1} \equiv 1 \pmod p\] Now, suppose \(p\) is a factor of our composite \(N\). If \(p-1\) is smooth, it means \(p-1\) is composed of small primes. Therefore, \(p-1\) will divide some large factorial \(B!\) (or a product of small prime powers). Let’s call this large number \(K\).

If \((p-1) \mid K\), then by Fermat’s theorem: \[a^K = a^{(p-1) \cdot \text{something}} = (a^{p-1})^{\text{something}} \equiv 1^{\text{something}} \equiv 1 \pmod p\] If \(a^K \equiv 1 \pmod p\), then \(p\) divides the difference \((a^K - 1)\). Since \(p\) also divides \(N\), we can use our favorite foundation, Magic #0 (GCD), to find it: \[g = \gcd(a^K - 1, N)\] If \(g\) is between \(1\) and \(N\), we have successfully plucked the prime \(p\) out of \(N\).

The \(p-1\) method is a one-shot deal for a specific factor \(p\).

If \(p-1\) is smooth: The algorithm finds \(p\) almost instantly using binary exponentiation and one GCD.

If \(p-1\) is NOT smooth: The algorithm fails completely. There is no way to tweak it to make it work for that specific \(p\).

Pollard’s method is extended by using elliptic curves where we aren’t stuck with the fixed value group size \(p-1\). By changing the elliptic curve, we effectively change the target from \(p-1\) to a random group order \(g\) in the Hasse interval \([p+1-2\sqrt{p}, p+1+2\sqrt{p}]\). If \(p-1\) isn’t smooth, maybe \(g\) is!

Example 3 (Python Implementation: Pollard’s \(p-1\).) Here is a simple version that uses a factorial-based \(K\).

Listing 1

Code

def pollard_p_minus_1(n, B=10000):""" Attempts to find a factor of n using Pollard's p-1 method. B is the smoothness bound. """ a =2# Usually start with base 2# Compute a^(B!) mod n efficiently# Instead of computing B!, we just do successive powersfor j inrange(2, B +1): a =pow(a, j, n)print(j, a) g = math.gcd(a -1, n)if1< g < n:return g # Success!else:returnNone# Failure: p-1 was not B-smoothn =31*71print(n)ans = pollard_p_minus_1(31*71, B=5)print(ans)

2201

2 4

3 64

4 1194

5 1954

31

Magic #6: The Elliptic Curve Method (ECM)

When Pollard’s \(\rho\) reaches its limit (usually factors around 15–20 digits), we bring in ECM. Invented by Hendrik Lenstra, ECM is the first algorithm whose complexity depends on the size of the smallest factor \(p\) rather than the size of \(N\), but it does so in a way that is much more flexible than \(\rho\). ECM is a generalization of Pollard’s \(p-1\) algorithm. In the \(p-1\) method, we hope that \(p-1\) is smooth (composed of small primes). If it isn’t, the algorithm fails. In ECM, we replace the multiplicative group \((\mathbb{Z}/p\mathbb{Z})^\times\) with the group of points on an elliptic curve \(E\) over \(\mathbb{F}_p\).

The order (number of points) of the group is \(g = |E(\mathbb{F}_p)|\).

By Hasse’s Theorem, this order is always in the range \([p+1-2\sqrt{p}, p+1+2\sqrt{p}]\).

The magic is that if one curve doesn’t have a smooth order, we simply pick a new curve with different parameters \(a\) and \(b\). Each curve gives us a new chance to hit a smooth group order.

Pick a random curve \(E\) and a point \(P\) on it.

Attempt to compute \(Q = kP\), where \(k\) is a product of many small primes.

In the process of point addition, we must perform a modular inverse. This inverse is calculated using the Extended Euclidean Algorithm.

If the inverse fails because the number isn’t coprime to \(N\), the GCD will suddenly reveal the factor \(p\).

While ECM is primarily a factorizer, it is also the basis for ECPP (Elliptic Curve Primality Proving).

To prove \(p\) is prime, we find a curve whose order \(m\) has a large prime factor \(q > (\sqrt{p}+1)^2\).

If we can show a point \(P\) has order \(q\) in \(E(\mathbb{F}_p)\), then \(p\) is prime.

This is recursive: we then have to prove \(q\) is prime, and so on, until we hit a small prime.

ECM is an excellent mid-game strategy. You can run hundreds or thousands of curves (curvesets) to find factors up to 40 or 50 digits before finally giving up and moving to the boss level: the Sieve.

Here are some details. An elliptic curve is defined by the Weierstrass equation: \[y^2 = x^3 + ax + b\] The parameters \(a\) and \(b\) are coefficients chosen from \(\mathbb{Z}/N\mathbb{Z}\). For the curve to be non-singular (smooth), we require the discriminant \(\Delta = 4a^3 + 27b^2 \not\equiv 0 \pmod N\).

If \(p\) is a prime divisor of \(N\), then any calculation we do modulo \(N\) is also happening hidden modulo \(p\). There is a natural projection (homomorphism): \[\phi: E(\mathbb{Z}/N\mathbb{Z}) \to E(\mathbb{F}_p).\] We used this in Pollard’s \(\rho\) method too.

When we add points on a curve modulo \(N\), we are simultaneously adding points on a shadow curve over the finite field \(\mathbb{F}_p\). The number of points on this shadow curve is the group order \(g = |E(\mathbb{F}_p)|\). By Hasse’s Theorem, this order \(g\) is always near \(p\): \[g \in [p+1-2\sqrt{p}, \ p+1+2\sqrt{p}]\]

ECM is a generalization of Pollard’s \(p-1\) algorithm. In the \(p-1\) method, we need the integer \(p-1\) to be smooth (composed of small primes). If it isn’t, the algorithm is stuck. The Magic of ECM is to find a smooth group order \(g\) for our chosen curve \(E\). If it isn’t, we don’t give up—we just change \(a\) and \(b\) to create a completely different curve with a new group order \(g'\). Because \(g'\) is a different random integer in the Hasse interval, we get a fresh roll of the dice to hit a smooth number.

To find the factor, we pick a point \(P\) on the curve and attempt to compute: \[Q = kP \pmod N\] where \(k\) is a massive integer (the product of all primes up to some bound \(B\)). If \(g\) is smooth and \(g\) divides \(k\), then in the shadow world modulo \(p\), the result should be the identity (the Point at Infinity (\(\mathcal{O}\))). How does this reveal \(p\) to us in the real world modulo \(N\)? Point addition on a curve involves calculating a slope \(\lambda\). For two points \((x_1, y_1)\) and \((x_2, y_2)\), the slope is: \[\lambda = \frac{y_2 - y_1}{x_2 - x_1} \pmod N\] To compute this, we must find the modular inverse of \((x_2 - x_1) \pmod N\). We use the Extended Euclidean Algorithm to find \(inv\) such that: \[inv \cdot (x_2 - x_1) \equiv 1 \pmod N.\] If \(\gcd(x_2 - x_1, N) = p\), the modular inverse does not exist. The Euclidean Algorithm will fail to find an inverse and will instead hand you the factor \(p\). Thus, ECM is effectively a search for a curve where the point addition crashes because it hits a multiple of \(p\).

YAFU launches thousands of curves in parallel.

Small \(a, b\): It starts with simple parameters.

Large Primes: It uses a Two-Stage process. Stage 1 looks for points that are totally smooth. Stage 2 looks for points that are smooth except for one large prime factor, significantly increasing the success rate.

Continued Fraction Method

The Continued Fraction Method (CFRAC), pioneered by Morrison and Brillhart in the early 1970s, was the first true “modern” factoring algorithm to bridge the gap between simple trial division and the sophisticated sieving techniques you are running now. The core insight of CFRAC is Factorization via Fermat’s Difference of Squares: finding \(x, y\) such that \(x^2 \equiv y^2 \pmod N\). To do this, we look for values \(Q_i = x_i^2 \pmod N\) that are smooth (their prime factors are all within our small Factor Base).The probability of a number being smooth increases dramatically as the number gets smaller. If we pick a random \(x\), \(x^2 \pmod N\) can be as large as \(N\). This is a “needle in a haystack” problem.

If \(A_k/B_k\) is the \(k\)-th convergent of the continued fraction expansion of \(\sqrt{N}\), it satisfies \[

A_k^2 - N B_k^2 = Q_k,

\] which implies \[

A_k^2 \equiv Q_k \pmod N.

\] The beauty of continued fractions is that for these specific \(A_k\) values, the remainder \(Q_k\) is bounded \[

|Q_k| < 2\sqrt{N}.

\] Instead of searching through a space of size \(N\), we are searching through a space of size \(2\sqrt{N}\). For a 100-digit number, this reduces the search space from \(10^{100}\) to roughly \(10^{50}\)—a massive algorithmic “win.”

While CFRAC uses the “best rational approximations” to guarantee small \(Q_k\) values, it generates them sequentially. The Quadratic Sieve (QS), which superseded it, realizes that we don’t need the absolute “best” approximations; we just need “good enough” ones that can be calculated in parallel. QS uses a polynomial \(f(x) = (x + \lceil\sqrt{N}\rceil)^2 - N\), where the values are still roughly \(O(\sqrt{N})\), but can be discovered via a Sieve of Eratosthenes-style sweep across an interval.

While it was the first algorithm to successfully factor a 50-digit Fermat number (\(F_7\)) in 1970, its complexity of \(L_n[1/2, \sqrt{2}]\) made it the bottleneck in Rivest’s 1977 prediction. It was essentially the “manual prototype” for the Quadratic Sieve; where CFRAC generates potential squares one-by-one through convergents, the Quadratic Sieve “manufactures” them in bulk through a much more efficient parallelizable sieving process.

Remark 1 (\(L\)-notation). \(L\)-notation is the “Richter scale” of computational number theory. It categorizes algorithms that sit in the awkward, massive gap between polynomial time (fast) and fully exponential time (slow).

The general form is: \[

L_n[\alpha, c] = e^{(c + o(1))(\ln n)^\alpha (\ln \ln n)^{1-\alpha}}.

\] For example, \(L_n[1/2, \sqrt{2}]\), means:

\(\alpha\) value (between 0 and 1) determines the “shape” of the complexity curve:

If \(\alpha = 0\): The formula simplifies to a power of \(\ln n\), which is polynomial time (like addition or multiplication).

If \(\alpha = 1\): The formula simplifies to a power of \(n\), which is fully exponential time (like trial division).

\(\alpha = 1/2\): This is the signature of the Continued Fraction Method (CFRAC) and the Quadratic Sieve (QS). It means the algorithm is “sub-exponential”—faster than trial division, but still significantly slower than polynomial time.

The value \(c = \sqrt{2} \approx 1.414\) is the specific “work factor” for the Continued Fraction Method. Different algorithms have different constants. For example, the Quadratic Sieve has \(c = 1\). Since \(c\) is in the exponent, that move from \(\sqrt{2}\) to \(1\) represents a massive increase in speed as \(n\) grows. CADO-NFS uses the General Number Field Sieve (GNFS), which drops \(\alpha\) from \(1/2\) to 1/3. This is why GNFS dominates for 100+ digit numbers; the “curve” is fundamentally flatter.

The \(o(1)\) is standard asymptotic notation meaning “some terms that go to zero as \(n\) goes to infinity.” such s as the “startup overhead” of the algorithm. For small \(n\), these terms matter; for your 100-digit number, they are negligible.

When Rivest made his prediction, he was looking at the \(L_n[1/2, \sqrt{2}]\) curve. Because the Quadratic Sieve improved the constant \(c\), and the Number Field Sieve later improved the \(\alpha\) to \(1/3\), the actual time required to factor the number “slid” down to a much lower complexity curve.

\(L\) terms for standard methods:

Trial Division \(L_n[1, c]\), exponential spped

CFRAC / QS \(L_n[1/2, c]\), sub-exponential

GNFS (CADO) \(L_n[1/3, c]\), sub-exponential, more overhead.

Magic #7: The SIQS and The Industrial Revolution of Factorization

When you are faced with a hard number—a product of two similarly sized primes, like an RSA key—Pollard’s \(\rho\) and ECM will eventually hit a wall. To break through, we need the Self-Initializing Quadratic Sieve (SIQS). This is not a random walk; it is an assembly line designed to build a solution.

The objective is based on Fermat’s Factorization Method. If we can find integers \(x\) and \(y\) such that: \[x^2 \equiv y^2 \pmod N\] Then \(N\) must divide \(x^2 - y^2 = (x-y)(x+y)\). Unless \(x \equiv \pm y \pmod N\), calculating \(\gcd(x-y, N)\) will yield a non-trivial factor. Finding such a pair by accident is impossible. SIQS builds them by combining smooth numbers.

A quadratic residue \(a \pmod p\) is a number that has a square root in the field \(\mathbb{F}_p\). In the Sieve, we only care about primes \(p\) where \(N\) is a quadratic residue. We collect these primes into our Factor Base.

Why? Because we are going to look for values of \(a\) such that \(Q(a) = a^2 - N\) is smooth over this factor base. If \(N\) is not a residue mod \(p\), then \(p\) can never divide \(a^2 - N\), making it useless for our construction.

A sieve is the heart of the Magic. Instead of performing trial division on millions of \(Q(a)\) values, we use a Sieve Array.

Initialize: Create an array where each index \(i\) represents a value \(a_i\). Store the approximate logarithm \(\log(Q(a_i))\) in each cell.

Sieve: For each prime \(p\) in the factor base, solve the congruence \(a_i^2 \equiv N \pmod p\) to find the roots \(r_1, r_2\).

Jump: Starting at \(r_1\) and \(r_2\), jump through the array in steps of \(p\), subtracting \(\log(p)\) from each cell you land on.

Harvest: After processing all primes, any cell that is near zero represents a \(Q(a_i)\) that is almost certainly a product of the small primes in our factor base.

Once we have more smooth Relations than we have primes in our factor base, we represent each relation as a vector of exponents modulo 2.

A product of numbers is a perfect square if all its prime exponents are even.

We use Gaussian Elimination (or the Block Lanczos algorithm in YAFU) to find a subset of rows that sum to the zero vector modulo 2.

This sum of rows corresponds to a product of relations that forms our perfect square \(y^2\). We take the square root, calculate the GCD, and the Dark Ages number is cracked.

Magic #8: The General Number Field Sieve (GNFS)

The Quadratic Sieve (\(O(e^{\sqrt{\ln n \ln \ln n}})\)) is limited because the numbers we are sieving (\(a^2 - n\)) grow too large. The Number Field Sieve (NFS) breaks this barrier by moving the problem out of the standard integers (\(\mathbb{Z}\)) and into Algebraic Number Fields.

In QS, we look for squares in one world: \(\mathbb{Z}/n\mathbb{Z}\). In GNFS, we build two different mathematical worlds (rings) and a map between them:

The Rational World: The standard integers \(\mathbb{Z}\).

The Algebraic World: A ring of algebraic integers \(\mathbb{Z}[\alpha]\), defined by a polynomial \(f(x)\) of degree \(d\) (usually 5 or 6).

We choose a polynomial \(f(x)\) and an integer \(m\) such that \(f(m) \equiv 0 \pmod n\). This creates a homomorphism \(\phi\) that maps the algebraic world back to the integers mod \(n\).

The goal is still to find \(x^2 \equiv y^2 \pmod n\). In GNFS, we look for pairs \((a, b)\) such that:

In the Rational World, the linear value \(a - bm\) is smooth.

In the Algebraic World, the algebraic number \(a - b\alpha\) has a smooth norm.

Because we are sieving over two different structures simultaneously, the numbers we need to check stay significantly smaller than \(a^2 - n\) in the Quadratic Sieve. Smaller numbers are exponentially more likely to be smooth (the Dickman function works in our favor here!).

CADO-NFS is the specialized open-source suite used for these massive computations. Its magic lies in its orchestration:

Polynomial Selection: Finding the perfect \(f(x)\) to keep the norms as small as possible.

Sieving (The Monster): This stage can run for months across thousands of CPUs. It uses a Lattice Sieve to find \((a, b)\) pairs where both worlds are smooth.

Filtering: Throwing away redundant relations.

The Matrix: Solving a linear system with hundreds of millions of rows.

Square Root: Calculating the square root in the algebraic number field (a notoriously difficult task).

The complexity of GNFS is: \[L_n[1/3, c] = e^{(c + o(1))(\ln n)^{1/3}(\ln \ln n)^{2/3}}\] Notice the \(1/3\) exponent. This is the holy grail. It means that as \(n\) grows, the difficulty of factoring with GNFS increases much more slowly than with the Quadratic Sieve. This \(1/3\) vs. \(1/2\) shift is why we can factor 250-digit numbers today instead of waiting for the heat death of the universe.

YAFU: The Modern Orchestrator

YAFU (Yet Another Factorization Utility) is the gold standard general-purpose factorization routine. It is not just an implementation of these algorithms; it is a highly adaptive expert system. It analyzes the input and launches a multi-stage attack:

Pre-Sieve: It clears out the first 600,000 primes (up to 10M) using a fast bit-sieve.

Rho-Stage: It runs several walks of Pollard’s \(\rho\) to catch the easy fruit. Run for 1 minute to find anything up to 15 digits.

ECM-Stage: It launches curvesets. If it finds a factor, it restarts the logic on the remaining composite part.

SIQS-Stage: If the number is still standing (usually when it’s a balanced semiprime), YAFU initializes the Sieve.

Bail to CADU-NFS: If the number is still unfactored and over 120 digits, YAFU will generate a CADO-NFS compatible file and suggest you move to a cluster.

The reason this pipeline works is because of the Dickman Function. We start with algorithms that look for Small Factors (Rho, ECM) because small factors are statistically much more likely to exist. We only move to the Global Sieve (SIQS, GNFS) once we have a high degree of confidence that no small factors are left to find.

Transition from the Quadratic Sieve to the Number Field Sieve: Below 100 digits SIQS is faster. The overhead of choosing a polynomial and setting up the two-world map in GNFS is too high. SIQS’s simpler one-world approach wins on sheer speed per iteration. Above 120 digits GNFS is the undisputed king. Because of the \(1/3\) exponent in its complexity, it scales much better. Even though each GNFS step is harder, you need far fewer relations to finish the job.

The magic you feel when using YAFU is the result of thousands of hours of optimization:

SIMD Parallelism: The sieving code is written in assembly/intrinsics to use AVX-512 instructions, processing multiple array cells at once.

Self-Initialization: In the SIQS, it changes the polynomial \(Q(a)\) frequently to keep the values in the array small, maximizing the probability of smoothness (thanks to the Dickman function - less likely to be smooth further from \(\sqrt{N}\)). This is called the Multiple Polynomial Quadratic Sieve (MPQS).

The Matrix Solver: It uses the Block Lanczos algorithm, which can solve a matrix with millions of rows in seconds using sparse matrix techniques.

Table 6: YAFU strategy by factor size.

Algorithm

Ideal Factor Size, \(p\)

Input Size \(N\)

Complexity \(O(f)\)

Hand-off Logic

Trial Division

1–6 digits

Any

\(O(B)\)

Always first. Clears the grass (tiny primes).

Pollard’s \(\rho\)

7–15 digits

Any

\(O(\sqrt{p})\)

Effortless. If no factor appears in ~10M iterations, move on.

ECM

15–40 digits

Any

\(O(e^{\sqrt{2 \ln p \ln \ln p}})\)

The Fisherman. Runs curves until the cost of a new curve > cost of SIQS.

SIQS

Balanced

50–110 digits

\(O(e^{\sqrt{\ln N \ln \ln N}})\)

The Factory. Best when \(N\) is a product of two similar primes.

GNFS

Balanced

110+ digits

\(O(e^{\sqrt[3]{\ln N (\ln \ln N)^2}})\)

The Super-Factory. Handed off to CADO-NFS for massive distributed runs.

Future Magic: The Quantum Leap and Shor’s Algorithm

We’ve moved from the linear slog of the Dark Ages to the logarithmic elegance of modern number theory. We use the Birthday Problem to find collisions, Elliptic Curves to find smooth group orders, and high-dimensional linear algebra to weave perfect squares out of smooth straw.

The next time you see YAFU factor a 100-digit number in minutes, remember: it isn’t just calculating—it’s exploiting the deep, probabilistic architecture of the integers. But there’s another revolution around the corner.

Just as we mastered the Industrial Age of factoring with the Quadratic Sieve, the horizon shifted. Enter Shor’s Algorithm (1994). This is the Nuclear Option of number theory. While the Best Classical Algorithm (GNFS) is sub-exponential, Shor’s is polynomial time (\(O(\log^3 N)\)).

Peter Shor realized that the problem of factoring an integer \(N\) can be reduced to the problem of finding the period (the order) of a function.

If you pick a random \(a < N\), the function \(f(x) = a^x \pmod N\) is periodic. Let \(r\) be the smallest integer such that: \[a^r \equiv 1 \pmod N\] If we can find this period \(r\), and if \(r\) is even, we can write: \[(a^{r/2})^2 - 1 \equiv 0 \pmod N\]\[(a^{r/2} - 1)(a^{r/2} + 1) \equiv 0 \pmod N\] And just like in our Quadratic Sieve, the factors of \(N\) are found by \(\gcd(a^{r/2} \pm 1, N)\).

On a classical computer, finding the period \(r\) is just as hard as factoring itself (you’d have to calculate \(a^x\) until it repeats, which is the Dark Ages all over again). Shor’s Algorithm uses two quantum superpowers to find \(r\) instantly:

Quantum Superposition: The computer creates a superposition of all possible values of \(x\). It calculates \(f(x) = a^x \pmod N\) for all\(x\) simultaneously in a single quantum state.

Quantum Fourier Transform (QFT): This is the magic. The QFT is used to measure the interference pattern of the periodic function. Just as a prism splits light into its frequencies, the QFT reveals the period \(r\) by collapsing the superposition into a state that represents the frequency of the function’s repetition.

If Shor’s is so fast (\(O(\log^3 N)\)), why isn’t RSA dead? The catch is decoherence.

Scaling: To factor an \(n\)-bit number, you need roughly \(2n\) logical qubits. For a 2048-bit RSA key, that’s 4096 perfect qubits.

Error Correction: Because quantum states are fragile (decoherence), we need thousands of physical qubits to create one logical qubit. Current quantum computers (like Google’s Sycamore or IBM’s Osprey) are still in the hundreds of noisy physical qubits range.

Shor’s algorithm proves that the security of modern encryption isn’t based on a mathematical impossibility, but on a hardware limitation. We are currently in the NISQ (Noisy Intermediate-Scale Quantum) era. When Fault-Tolerant quantum computers arrive, the Dark Ages won’t just be over—the current age of RSA and Elliptic Curves will become history.

Postscript: Primes as Effectively Hyperuniform Systems

While this post treats primes as pseudo-random entities to be hunted and factored, there is a burgeoning field of research that views the primes through the lens of Statistical Mechanics and Scattering Theory.

There is hidden order in the prime forest. The paper Uncovering Multiscale Order in the Prime Numbers via Scattering,(Torquato et al. 2018) treats prime numbers along the number line as a collection of atoms. When you perform a scattering experiment on these atoms (essentially a Fourier transform of the prime locations), you don’t see the chaotic noise of a random gas. Instead, you see a pattern called Effective Hyperuniformity.

Primes are not perfectly periodic like a crystal (which would make factoring trivial), but they are not disordered like a Poisson process. They exist in a state of constrained disorder. The scattering pattern of primes shows peaks (similar to how X-rays interact with crystals) at all rational frequencies. This suggests that the primes are aware of the sieve structure at every scale simultaneously. These are called Bragg-like Peaks. There is an apparent multiscale order: while local gaps between primes look random (the Dark Ages view), the global distribution of primes is incredibly rigid.

For the factorizer, this is a reminder that the Magic we use—the GCD, the Quadratic Sieve, the QFT—is only possible because we are exploiting a deep, multiscale harmony that persists despite the apparent chaos of the number line.

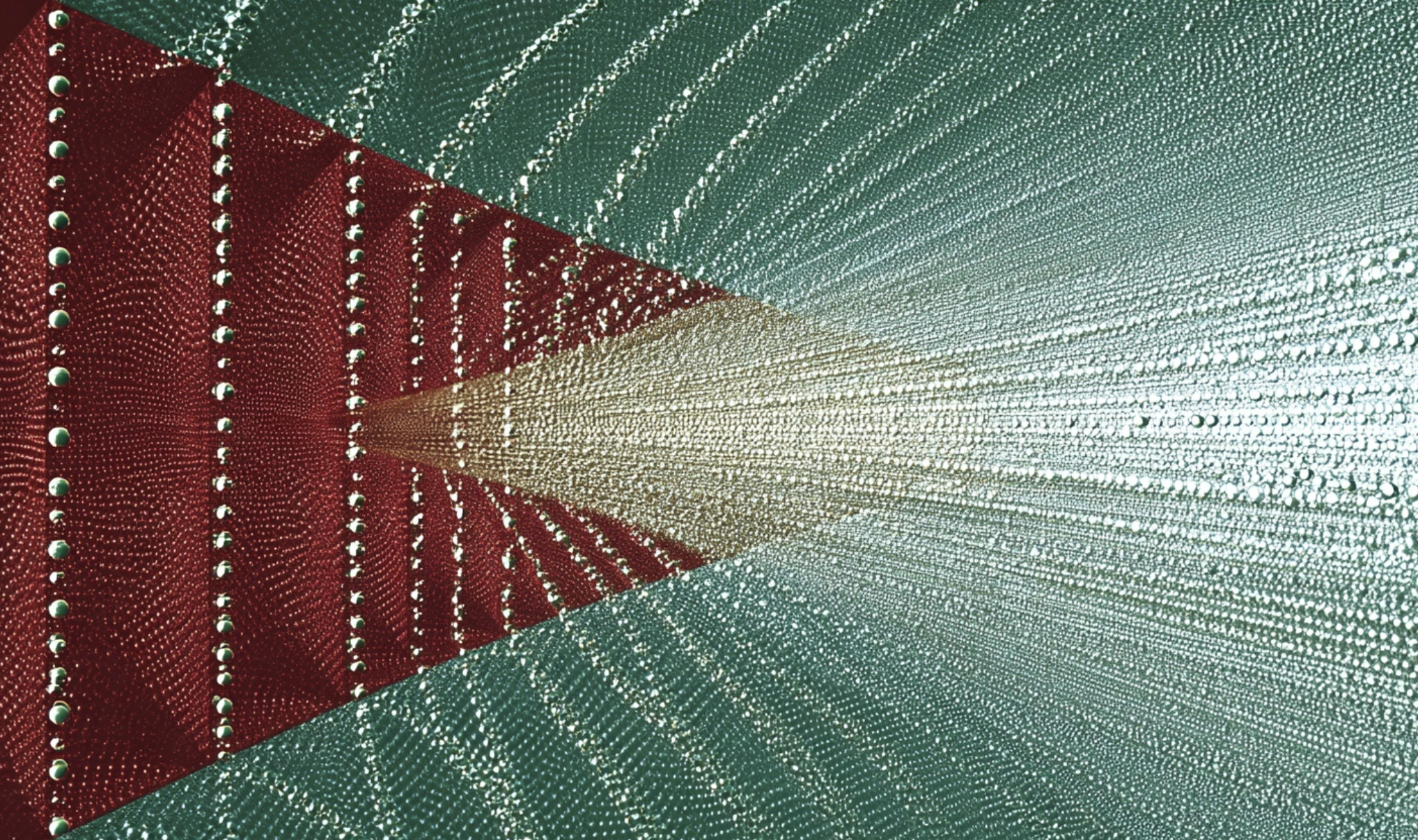

Example 4Figure 1 shows the scattering intensity of primes. It reveals that prime numbers are not merely a sequence of pseudo-random gaps, but rather a structure characterized by Effective Hyperuniformity. In physics, this pattern is analogous to a Quasicrystal: a state of matter that lacks a simple repeating lattice but still produces sharp, discrete diffraction peaks (Bragg peaks) under a Fourier transform.

The blue spikes are the structural resonances of the Sieve of Eratosthenes. When you “sieve out” multiples of \(p\), you create a periodic void in the number line with frequency \(1/p\). Because this happens simultaneously for all primes, the resulting spectrum is a dense hierarchy of rational harmonics (\(1/2, 1/3, 2/5, \dots\)). While a random distribution would yield a flat “noise floor” (the red dotted line), the primes concentrate their energy into these specific rational bins. This rigid “diffraction pattern” proves that the primes possess a hidden, long-range order that persists even as they scale toward infinity—a crystalline architecture that modern factoring utilities effectively “tune into” to find their targets.

Code

import matplotlib.pyplot as pltfrom scipy.fft import fft, fftfreqfrom scipy.ndimage import maximum_filter1dfrom scipy.signal import find_peaksfrom fractions import Fraction%config InlineBackend.figure_format ='svg'def generate_automated_prime_scattering(start: int=10**6, length: int=100_000, peak_height=10., inter_peak_dist=100, tol=1e-6, max_denom=100):""" Automatically detects peaks in the scattering spectrum, identifies their rational counterparts, and plots them with standardized downward arrows. """# --- 1. Sieve for Primes --- limit = start + length sieve = np.ones(limit, dtype=bool) sieve[0:2] =Falsefor i inrange(2, int(limit**0.5) +1):if sieve[i]: sieve[i*i::i] =False prime_slice = sieve[start:start+length].astype(float)# --- 2. Random Control --- density = np.mean(prime_slice) random_slice = (np.random.random(length) < density).astype(float)# --- 3. Compute Scattering Intensities ---def get_intensity(signal): sf = fft(signal - np.mean(signal))return np.abs(sf)**2 prime_intensity = get_intensity(prime_slice) rand_intensity = get_intensity(random_slice) freqs = fftfreq(length) half = length //2 x = freqs[1:half] prime_data = prime_intensity[1:half] rand_data = rand_intensity[1:half]# --- 4. Moving Average Max of Control --- rolling_max_control = maximum_filter1d(rand_data, size=500)# --- 5. Automated Peak Detection & Rational Identification ---# Find peaks that are significantly above the noise floor# height is set to a multiple of the mean to catch structural peaks peaks, _ = find_peaks(prime_data, height=np.mean(prime_data) *\ peak_height, distance=inter_peak_dist) discovered_peaks = []for p in peaks: k_val = x[p]# limit_denominator(max_denom) captures structural sieve harmonics# (2, 3, 4, 5, 6, 7, 8, etc.) frac = Fraction(k_val).limit_denominator(max_denom)# Only label if it's very close to the rational (structural peak)ifabs(k_val -float(frac)) < tol: discovered_peaks.append((k_val, prime_data[p], str(frac)))# --- 6. Plotting --- fig, ax = plt.subplots(figsize=(7, 5), layout='constrained') ax.plot(x, prime_data, lw=0.5, label="Prime Scattering I(k)") ax.plot(x, rolling_max_control, color='red', lw=1.2, linestyle=':', label="Random Control Max")# Layout: Define a "ceiling" for the labels to hang from y_max = np.max(prime_data) ceiling = y_max *2# Fixed horizontal line above the data arrow_props =dict(arrowstyle="-|>", ec='grey', fc='grey', lw=.5)# Annotate found peaksfor k_val, p_val, label in discovered_peaks: ax.annotate(f"k={label}", xy=(k_val, p_val), # Peak tip xytext=(k_val, ceiling), # Top of arrow ha='center', va='bottom', fontsize=7, rotation=90, # Vertical text to fit more peaks arrowprops=arrow_props) ax.axhline(np.mean(prime_data) * peak_height, ls='--', lw=1, c='green', label='Peak lower-bound')# --- 7. Final Polish --- ax.set_title(f"Prime Resonances: from {start:,} for {length:,}") ax.set_xlabel("Frequency (k)") ax.set_ylabel("Intensity I(k)") ax.set_yscale('log') ax.set_ylim(bottom=10, top=ceiling *10) # Padding for labels ax.grid(True, which='major', linestyle='-', alpha=0.2) ax.legend(loc='lower left')window =2*3*5*7*11*13generate_automated_prime_scattering(start=10**6, length=window, peak_height=10, inter_peak_dist=200, tol=1e-10, max_denom=window)

Figure 1

Appendix A: Sieve of Eratosthenes and List of Primes

Code

from textwrap import wrapdef get_primes(n: int) ->list[int]:""" Finds all prime numbers up to n using an odds-only Sieve of Eratosthenes. Implementation uses a bytearray for memory efficiency and fast slice assignment. It maps the index i to the odd integer 2i + 1, effectively halving the memory footprint and the number of operations. """if n <2:return []if n ==2:return [2]# size represents the number of odd integers up to n# index i corresponds to the integer 2i + 1 size = (n -1) //2 sieve =bytearray([1]) * (size +1) limit = math.isqrt(n)# We only need to sieve up to sqrt(n)# limit // 2 converts the integer limit to its corresponding index ifor i inrange(1, limit //2+1):if sieve[i]: p =2* i +1# Mark multiples starting from p^2 to avoid redundant work.# The index for p^2 is calculated as (p^2 - 1) / 2 = 2i(i + 1). start =2* i * (i +1)# Calculate the number of multiples to be marked. num_multiples = (size - start) // p +1# Fast slice assignment in C-speed to zero out composites. sieve[start::p] =b'\x00'* num_multiples# Assemble the results: include 2 & convert prime indices to integers.# sieve[0] is skipped because it represents the number 1.return [2] + [2* i +1for i inrange(1, size +1) if sieve[i]]p_list = get_primes(1_000)print(f'{len(p_list):d} Primes to 1_000.')print('\n'.join(wrap(', '.join(map(lambda x: f"{x:d}", p_list)), 60)))print('-'*60)p_list = get_primes(10_000_000)print(f'Primes to 10_000_000: {len(p_list):d}.')

RSA encryption relies on Euler’s Totient Theorem (extending Fermat’s result for primes), which states that if \(\text{gcd}(m, n) = 1\), then \(m^{\phi(n)} \equiv 1 \pmod n\).

Two parties A and B want to communicate. Messages \(M\) are numbers that encode text. For example, we could encode a sentence using A=1, B=2, C=3, etc.

Key generation and setup

Pick two large primes, \(p\) and \(q\).

Compute \(n = p \times q\). This \(n\) is the modulus and is part of the public key.

Compute Euler’s Totient, \(\phi(n) = (p-1)(q-1)\). This represents the count of numbers less than \(n\) that are coprime to \(n\).

Choose a public exponent \(e\). Usually, \(e = 65537\). It must be coprime to \(\phi(n)\).

Compute the private exponent \(d\). This is the modular multiplicative inverse of \(e\) modulo \(\phi(n)\), satisfying: \[

ed \equiv 1 \pmod{\phi(n)}.

\]

Public Key: \((n, e)\)

Private Key: \((d)\)

Encryption: To encrypt a message \(M\), the sender computes: \[

C = M^e \pmod n.

\]

Decryption: To recover the message, the receiver uses the private key \(d\)\[

M = C^d \pmod n.

\] Since \(ed = k\phi(n) + 1\)\[

C^d = (M^e)^d = M^{ed} = M^{k\phi(n) + 1} = (M^{\phi(n)})^k \times M \equiv 1^k \times M \equiv M \pmod n.

\]

An attacker knows \(n\) and \(e\). To find \(d\), they must calculate the modular inverse of \(e\) modulo \(\phi(n)\). However, to calculate \(\phi(n)\), they must know \(p\) and \(q\) which forces the attacker to factor \(n\).

ElGamal public key encryption is similar to RSA. It works as follows. The parties agree to work modulo \(p=47\) and use \(g=5\) as their generator. These are fixed for all messages. A sets up a public key:

A selects a secret private key\(a=10\) and computes \(g^a\pmod{p} = 12\) using binary exponentiation.

A tells B (any anyone else) the public key\(g^a=12\) but not \(a\). The discrete logarithm problem means it is hard for anyone to determine \(a\).

Message coding

B converts their message into number \(M\) between 1 and \(p-2\)

Example: \(M=30\)

B picks a message-specific secret \(k=17\) and computes

\(g^k=38\)

\((g^a)^k=12^{17}=7\)

\(g^{ak}M = 7\times 30 \equiv 22\pmod{p}\)

B sends the message pair \((g^k, g^{ak}M)=(38, 22)\) to A

Message decoding

A, knowing \(a\), can compute \(g^{ak}=(g^k)^a=38^{10}=7\)

A can then compute the reciprocal, \(1/7 = 27\) (\(27\times 7=189 =4\times 47 + 1\)) using the extended Euclidean algorithm

A recovers the message \(M=27\times 22 = 30\)!

Table 7: Who know what during ElGamal.

Step

A Only

B Only

Public

1

\(g=5\), \(p=47\)

2

\(a=10\)

\(g^a=12\)

3

\(M=30\), \(k=17\)

\(g^k=38\), \(Mg^{ak}=22\)

4

\(1/g^{ak}=27\), \(M=30\)

nothing new

Standard ElGamal over integers (like our \(p=47\) example) is rarely used today because the keys have to be just as massive as RSA keys to be secure. However, Elliptic Curve ElGamal (better known as ECDSA or Ed256) is everywhere: WhatsApp/Signal encryption, Bitcoin/Ethereum signatures, Apple/Google Pay, and modern mobile apps. It is used because it is so efficient. A 256-bit Elliptic Curve key provides the same security as a 3072-bit RSA key. This means faster handshakes, less battery drain on your phone, and less data sent over the air. Standard ElGamal is a point moving round a circle; with ECs is a point moving around two interlocked circles in an unpredictable manner. That makes it more secure.

Appendix C: Annotated Sources

Crandall, R., & Pomerance, C. (2005). Prime Numbers: A Computational Perspective. Springer. (Crandall and Pomerance 2005) This is the absolute must-have. It covers everything we discussed: the Dickman function, the transition from Pollard’s Rho to ECM, and the most detailed treatment of the Quadratic Sieve (and GNFS) available in print. It bridges the gap between pure number theory and the dirty implementation details used in YAFU.

Bach, E., & Shallit, J. (1996). Algorithmic Number Theory, Vol. 1: Efficient Algorithms. MIT Press. Why: This is the rigorous computer science view. It provides the complexity analysis for Magic #0 (GCD) and Magic #1 (Miller-Rabin). It’s particularly good for understanding the Big-O limits of the Dark Ages.

Cohen, H. (1993). A Course in Computational Algebraic Number Theory. Springer. (Cohen 1993) Why: Henri Cohen is a legend in the field (one of the creators of the PARI/GP system). This book is excellent for the Non-trivial roots of unity and the Elliptic Curve (ECM) sections. It is mathematically denser than Crandall-Pomerance but very rewarding for a PhD in mathematics.

Nielsen, M. A., & Chuang, I. L. (2010). Quantum Computation and Quantum Information. Cambridge University Press. Why: Known affectionately as “Mike and Ike,” this is the definitive text for Shor’s Algorithm. It explains the Quantum Fourier Transform (QFT) with the Prism clarity we discussed and provides the full proof of how period-finding reduces to factoring.

Crandall, R. E., & Pomerance, C. (2005). Prime numbers: a computational perspective. Springer.

Torquato, S., Zhang, G., & De Courcy-Ireland, M. (2018). Uncovering multiscale order in the prime numbers via scattering. Journal of Statistical Mechanics: Theory and Experiment, 2018(9). https://doi.org/10.1088/1742-5468/aad6be

Source Code